Google webmaster tools

Introduction

Google Webmaster Tools is a Google service that allows you to analyze several features of your web sites, e.g. with the idea of doing some search engine optimization (SEO). You can find out, for example:

- which keywords are used to find your pages and how well these pages show up in a Google search

- Top web pages found

- Crawl statistics

- who is linking to your pages (also consider using Yahoo explorer, I like it better for finding links that point to individual pages)

An alternative with different functionalities is Google Analytics.

If you have a fairly organized web site (like this wiki, we hope), these tools are mostly useful for figuring out what people are interested in. You could then improve these contents, for example. You might also consider taking action to improve the visibility of other pages.

If a website is huge and disorganized like our main site, you could use the tool to improve the whole website in several ways. E.g. for tecfa.unige.ch the top search queries we got in March 2009 were: tigre, image, attention, blanche neige, spider bites, unige and saw. All irrelevant :)

Statistics

This section should be updated since the interface changed. For now, I just inserted some quick screenshots. - Daniel K. Schneider 17:59, 10 April 2011 (CEST).

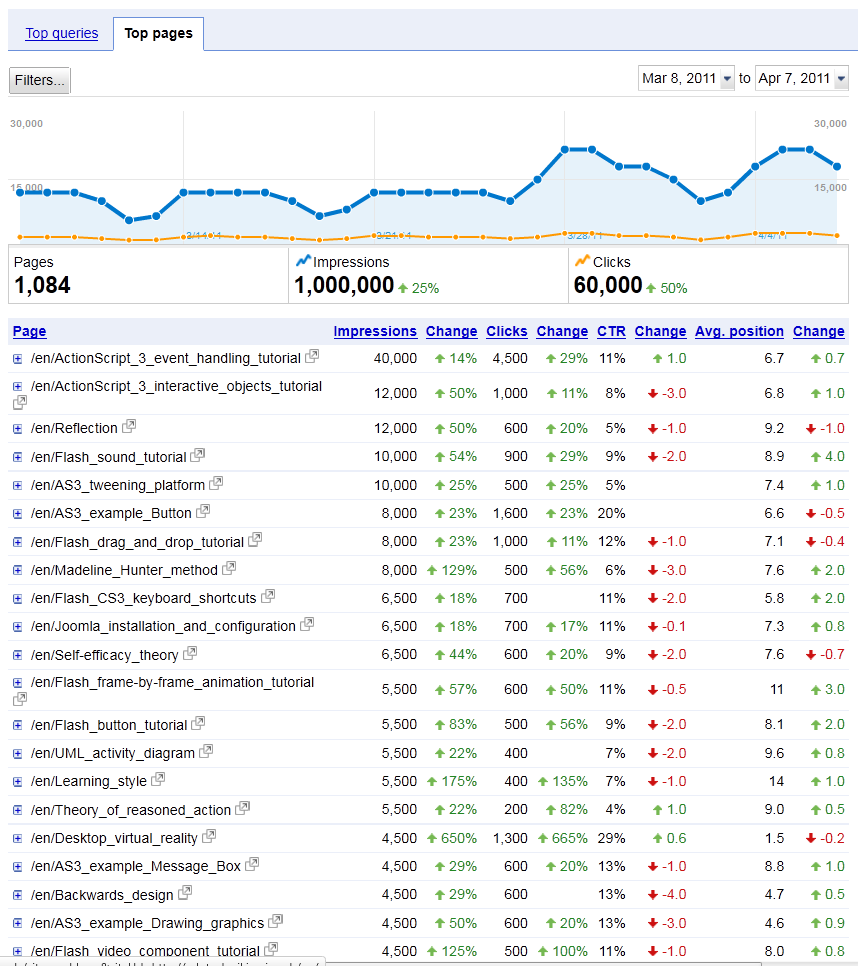

The most useful features are the search queries, the keywords (that led to your site) and the backlinks from other sites. Below are screenshots of the April 2011 edition.

Top pages don't get very good click-through rates (CTR) which is normal. These are popular subjects for which better web sites exist.

As soon as we get closer to our core subject (educational technology), we get better click-through rates, typically between 40 and 60%, unless the search term showed an irrelevant wiki page. E.g. "dublin wiki" gives a high search rank, but the only "dublin" we have in this wiki is "Dublin Core" (a metadata standard).
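The click-through rate is simply the number of clicks divided by the number of impressions. The following is a minimal sketch of that calculation; the function name and the figures are made up for illustration and are not taken from the tool.

```python
def click_through_rate(clicks: int, impressions: int) -> float:
    """Return the CTR as a percentage; 0.0 when there were no impressions."""
    if impressions <= 0:
        return 0.0
    return 100.0 * clicks / impressions


# Hypothetical figures (not real EduTechWiki data).
popular_query = click_through_rate(5, 100)
core_query = click_through_rate(50, 100)
print(f"{popular_query:.1f}% vs. {core_query:.1f}%")
```

A query with many impressions but few clicks is the kind of "popular subject for which better web sites exist" described above.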

An older version (2009) showed the top 20 Google queries that made people land on your website and showed the typical position in displayed results. You can see in the following screen dump which EdutechWiki pages come out on top. Most queries are relevant, i.e. people should find what they are looking for. Finally, the pages that turn out to be most popular are not necessarily the ones I am most proud of :).

The column to the left (impressions) shows the top 20 queries in which EdutechWiki appeared. The first item is totally useless (i.e. a wiki template that I have now removed since it wasn't used).

Of course, Google is not the only search engine and one could argue that certain types of users (e.g. beginners using Windows) would use a different engine and look for other contents. Here is a list of top search key phrases for February 2009 identified by a locally installed web log analyzer. There is quite an important overlap.

AWStats search keyphrases for February 2009.

| Keyphrase | Searches | Percent |
|---|---|---|
| self efficacy theory | 460 | 0.6 % |
| incidental learning | 445 | 0.6 % |
| theory of reasoned action | 329 | 0.4 % |
| self-efficacy theory | 316 | 0.4 % |
| backwards design | 315 | 0.4 % |
| as3 drag and drop | 238 | 0.3 % |
| flow theory | 195 | 0.2 % |
| instructional design models | 176 | 0.2 % |
| madeline hunter | 152 | 0.2 % |
| task environment | 152 | 0.2 % |
| learning environment | 152 | 0.2 % |
| human information processing | 143 | 0.1 % |
| learning strategies | 135 | 0.1 % |
| what is pedagogical theory | 125 | 0.1 % |
| flash button tutorial | 125 | 0.1 % |
| experience sampling method | 124 | 0.1 % |
| elgg | 118 | 0.1 % |
| as3 tutorials | 117 | 0.1 % |
| actionscript 3 button tutorial | 111 | 0.1 % |
| programmed instruction | 109 | 0.1 % |

Notes:

- I removed three entries that concern the French version. The top request in French was for "constructivisme", which also shows up as the top query in Google Webmaster Tools.

18% constructivisme 3

- Also, the percentages are not the same, since (a) our own web log analyzer also analyses other partitions of edutechwiki.unige.ch and (b), more importantly, it includes all the pages that web robots should not index.

The Google webmaster tool only shows the top 20 search queries, which is a real pity. Showing a few dozen more queries would be really helpful.

You can also consult crawl statistics, e.g. your overall PageRank. Most pages in EdutechWiki get a low one, which is not a surprise. EdutechWiki is not that popular.

What Googlebot sees is another interesting feature. E.g. it shows the words and phrases that appear in external links, with different variations lumped together.
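Lumping variations together can also be approximated on your own log data. The sketch below merges keyphrases that differ only in case or hyphenation, using three rows from the AWStats table above. The normalization rule is an assumption made for illustration and is certainly much simpler than whatever Google does.

```python
from collections import Counter


def normalize(phrase: str) -> str:
    """Lowercase, turn hyphens into spaces and collapse whitespace."""
    return " ".join(phrase.lower().replace("-", " ").split())


def lump_variants(rows):
    """Sum the counts of keyphrases that normalize to the same string."""
    totals = Counter()
    for phrase, count in rows:
        totals[normalize(phrase)] += count
    return totals


# Three rows copied from the AWStats table (February 2009).
rows = [
    ("self efficacy theory", 460),
    ("self-efficacy theory", 316),
    ("incidental learning", 445),
]

for phrase, count in lump_variants(rows).most_common():
    print(phrase, count)
```

With the two spellings merged, "self efficacy theory" clearly becomes the top keyphrase.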

Finally, subscriber stats will show the number of users who have subscribed to RSS feeds.

Pages with external links

This table provides a list of pages on EdutechWiki that have links pointing to them from other sites. These backlink counts are often hugely overestimated, since some people add a stable link to their blog, i.e. the link will show up on each blog page. E.g. in March 2005 the main page was linked about 2500 times, but dozens of times by the same service.
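One way to correct for this overestimation is to count distinct linking hosts instead of individual links. The following is a rough sketch under that assumption; the input format (pairs of linking URL and target page) and the example URLs are hypothetical.

```python
from collections import defaultdict
from urllib.parse import urlparse


def distinct_linking_hosts(links):
    """Map each target page to the set of distinct host names linking to it."""
    hosts = defaultdict(set)
    for source_url, target in links:
        host = urlparse(source_url).hostname
        if host:
            hosts[target].add(host)
    return hosts


# Hypothetical backlinks: two of the three come from the same blog.
links = [
    ("http://blog.example.org/2009/01/post-a", "/en/Main_Page"),
    ("http://blog.example.org/2009/02/post-b", "/en/Main_Page"),
    ("http://other.example.net/links.html", "/en/Main_Page"),
]

for target, host_set in distinct_linking_hosts(links).items():
    print(target, len(host_set))
```

Here three links to the main page collapse into two distinct linking sites.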

You also can analyze internal links. That may be a very useful feature for other websites, but that's something a wiki can do by itself.

Downloads

One can download data files for both statistics and external links in CSV format to do some further analysis. One interesting feature is that you will get statistics from several national Google sites (e.g. google.com, google.ch).

Some data in the file are really weird. I do get the statistics for a website like edutechwiki.unige.ch/en but also for 3-4 irrelevant ones like edutechwiki.unige.ch/en/4c.
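Such odd entries can be filtered out before any further analysis. Below is a minimal sketch using Python's standard csv module. The column names (site, query, position) and the sample rows are assumptions made for illustration; the layout of the real export may well differ.

```python
import csv
import io

# Hypothetical export: real column names and values may differ.
SAMPLE = """site,query,position
edutechwiki.unige.ch/en,example query a,3
edutechwiki.unige.ch/en/4c,example query b,9
edutechwiki.unige.ch/en,example query c,5
"""


def rows_for_site(csv_file, site):
    """Yield only the rows whose 'site' column equals the given site."""
    for row in csv.DictReader(csv_file):
        if row["site"] == site:
            yield row


kept = list(rows_for_site(io.StringIO(SAMPLE), "edutechwiki.unige.ch/en"))
for row in kept:
    print(row["query"], row["position"])
```

For a downloaded file, replace the io.StringIO object with a file opened through open(path, newline="").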

Malware and removal tools

Malware alerts:

“If Google detects that your site has been compromised, we'll tell you about it in Webmaster Tools (to ensure that you're notified quickly, you can have your Message Center messages forwarded to your email account). If the hacker inserted malware into your site, we'll also identify your site as infected in our search results to protect other users.” (Cleaning your site, retrieved 17:42, 29 September 2010 (CEST))

Removals from the cache:

Once you have blocked or removed a resource from your site, you can request that the page be removed from Google's cache.

- My Removal Requests is a page that allows you to submit a request and then track removal status. This can be useful, if you don't want to wait for Google to crawl your site again.

Content Analysis

In the Diagnostics section you will find the Content Analysis tool. It will show pages with duplicated title tags seen from the server root. E.g. for "Document Object Model - EduTech Wiki" we have:

- /en/DOM

- /en/Document_Object_Model

- /fr/Document_Object_Model

Nothing to worry about. There is one redirection and both an English and French version :) Most duplicates shown fall in these two categories.
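The same kind of check is easy to reproduce if you have a list of pages and their titles. The sketch below groups paths by title and reports titles used more than once. The three DOM entries come from the example above, while the /en/Main_Page entry and its title are hypothetical.

```python
from collections import defaultdict


def duplicate_titles(pages):
    """Return {title: sorted paths} for every title used by more than one page."""
    groups = defaultdict(list)
    for path, title in pages:
        groups[title].append(path)
    return {title: sorted(paths) for title, paths in groups.items() if len(paths) > 1}


pages = [
    ("/en/DOM", "Document Object Model - EduTech Wiki"),
    ("/en/Document_Object_Model", "Document Object Model - EduTech Wiki"),
    ("/fr/Document_Object_Model", "Document Object Model - EduTech Wiki"),
    ("/en/Main_Page", "EduTech Wiki"),  # hypothetical page with a unique title
]

for title, paths in duplicate_titles(pages).items():
    print(title, paths)
```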

Using the service

A quick howto

Using Google webmaster tools is fairly easy, if you have a Google account and control over your website.

- Sign into Google Webmaster Tools with a Google Account.

- Add your site, then "verify" it.

- Add either a meta tag to all pages or (better) add an HTML file to the root directory. The file is empty, but its name is provided by Google.
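If you choose the meta tag option, you can check that a page really carries the tag before asking Google to verify the site. The following is a rough sketch using Python's standard html.parser. The tag name and the token are placeholders: use whatever values Google gives you. The name google-site-verification in the example is an assumption about the current tag name and may differ from what the tool displayed at the time this page was written.

```python
from html.parser import HTMLParser


class MetaFinder(HTMLParser):
    """Collect the content attribute of every <meta> tag with a given name."""

    def __init__(self, name):
        super().__init__()
        self.name = name.lower()
        self.contents = []

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = dict(attrs)
        if (attr.get("name") or "").lower() == self.name:
            self.contents.append(attr.get("content"))


def has_verification_tag(html, name, token):
    """Return True if the page has <meta name=NAME content=TOKEN>."""
    finder = MetaFinder(name)
    finder.feed(html)
    return token in finder.contents


# Placeholder page and token, for illustration only.
PAGE = (
    '<html><head><meta name="google-site-verification" '
    'content="PLACEHOLDER-TOKEN"></head><body></body></html>'
)
print(has_verification_tag(PAGE, "google-site-verification", "PLACEHOLDER-TOKEN"))
```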

In addition to using the Webmaster Tools website, you can also look at some data from iGoogle by installing a few widgets. You find them in the Tools section. However, you will get less information.

Alternatives

See search engine optimization

Links

- At Google

- Google Webmaster Central

- Google Webmaster Central blog

- Search Engine Optimization (SEO) at Google webmaster Central

- Other

- Website Traffic Analysis Tool - Google Webmaster Tools (A short review of its features).

- Google Webmaster Tools - Key Website Analysis and Essentials (another short feature review).

- Setting Up Google Webmaster Tools and Google Webmaster Tools Dashboard and more ...

- Analysis tools

- LinkingToMe, LinkingToMe summarizes the CSV files available from Google Webmaster Tools to produce an HTML report showing how other sites are linking to your site. (not tested).

- I wonder if there are any publications that show how to do multi-variate statistical analysis of the csv files ...
