Repertory grid technique


Definitions

The repertory grid technique (RGT or RepGrid) is a method for eliciting personal constructs. It is based on George Kelly's Personal Construct Theory (1955) and was initially developed within this context. As a methodology, it can be used in a variety of fundamental and applied research projects on human constructs. A particular strength of the repertory grid technique is that it allows the elicitation of perceptions without researcher interference or bias (Whyte & Bytheway, 1996).

This piece might be used as an introduction to RepGrid methodology if the reader is interested in some "under the hood" and background issues. Otherwise, if you just need a short executive summary, check the links section.

Repertory grid analysis is also popular outside academia, e.g. in counseling and marketing. Today, several variants of the global concept seem to exist, some more complex than others. According to Slater (1976), cited by Dillon (1994:76), its use as an analytic tool does not require acceptance of the model of man which Kelly proposed. Also within "mainstream" RGT, several kinds of methods exist to elicit constructs and to analyse them. A common way to describe the technique is identifying a set of "elements" (a set of "observations" from a universe of discourse) which are rated according to certain criteria termed "constructs". “The elements and/or the constructs may be elicited from the subject or provided by the experimenter depending on the purpose of the investigation. Regardless of the method, the basic output is a grid in the form of n rows and m columns, which record a subject's ratings, usually on a 5- or 7-point scale, of m elements in terms of n constructs” (Dillon, 1994:76).

One reason why the repertory grid technique is popular is that repertory grids “have three major advantages over other quantitative and qualitative techniques. These advantages are the ability to determine the relationship between constructs, ease of use, and the absence of researcher bias. Repertory grids allow for the precise defining of concepts and the relationship between these concepts.” (Boyle, 2005).

The RepGrid technique is best used when participants have practical experience with the studied domain, i.e. they must be able to identify representative elements and to compare them through a set of (their own) criteria. E.g. doing grid analysis with teachers about educational modelling languages might not be a good idea, but it could be done with researchers and power users who are familiar with recent advances in learning design. This also implies that RepGrid works best when concrete, practical examples exist. E.g. this would be the case for various tools that support on-line learning (LMS, portalware, etc.).

- Some other definitions of RGT (emphasized text by DKS).

“The RGT (Kelly, 1955) originally stems from the psychological study of personality (see Banister et al., 1994; Fransella & Bannister, 1977, for an overview). Kelly assumed that the meaning we attach to events or objects defines our subjective reality, and thereby the way we interact with our environment. The idiosyncratic views of individuals, that is, the different ways of seeing, and the differences to other individuals define unique personalities. It is stated that our view of the objects (persons, events) we interact with is made up of a collection of similarity–difference dimensions, referred to as personal constructs. For example, if we perceive two cars as being different, we may come up with the personal construct fancy–conservative to differentiate them. On one hand, this personal construct tells something about the person who uses it, namely his or her perceptions and concerns. On the other hand, it also reveals information about the cars, that is, their attributes.” (Hassenzahl & Wessler, 2000:444)

“[..]The “Repertory Grid” [...] is an amazingly ingenious and simple ideographic device to explore how people experience their world. It is a table in which, apart from the outer two columns, the other columns are headed by the names of objects or people (traditionally up to 21 of them). These names are also written on cards, which the tester shows to the subject in groups of three, always asking the same question: “How are two of these similar and the third one different?” [...] The answer constitutes a “construct”, one of the dimensions along which the subject divides up her or his world. There are conventions for keeping track of the constructs. When the grid is complete, there are several ways of rating or ranking all of the elements against all the constructs, so as to permit sophisticated analysis of core constructs and underlying factors (see Bannister and Mair, 1968) and of course there are programs which will do this for you.” (Personal Construct Psychology, retrieved 14:09, 26 January 2009 (UTC).)

“The Repertory Grid is an instrument designed to capture the dimensions and structure of personal meaning. Its aim is to describe the ways in which people give meaning to their experience in their own terms. It is not so much a test in the conventional sense of the word as a structured interview designed to make those constructs with which persons organise their world more explicit. The way in which we get to know and interpret our milieu, our understanding of ourselves and others, is guided by an implicit theory which is the result of conclusions drawn from our experiences. The repertory grid, in its many forms, is a method used to explore the structure and content of these implicit theories/personal meanings through which we perceive and act in our day-to-day existence.” (A manual for the repertory grid, retrieved 12:18, 26 January 2009 (UTC)).

“The term repertory derives, of course, from repertoire - the repertoire of constructs which the person had developed. Because constructs represent some form of judgment or evaluation, by definition they are scalar: that is, the concept good can only exist in contrast to the concept bad, the concept gentle can only exist as a contrast to the concept harsh. Any evaluation we make - when we describe a car as sporty, or a politician as right-wing, or a sore toe as painful - could reasonably be answered with the question 'Compared with what?' The process of taking three elements and asking for two of them to be paired in contrast with the third is the most efficient way in which the two poles of the construct can be elicited.” (Enquire Within, Kelly's Theory Summarised, retrieved 12:18, 26 January 2009 (UTC).)

“The repertory grid technique is used in many fields for eliciting and analysing knowledge and for self-help and counselling purposes.” (Repertory Grid Technique, retrieved 12:18, 26 January 2009 (UTC).)

Overview

Most repertory grid analyses use the following principle:

- The designer has to select a series of elements that are representative of a topic. E.g. to analyze perception of teaching styles, the elements would be teachers. To analyze learning materials, the elements could be learning objects. To analyze perception of laptop functionalities, the elements are various laptop models. For the various kinds of knowledge elicitation interviews (as described below), often cards are used. E.g. the element names (and maybe some extra information such as a picture) are shown to the participants.

- The next step is knowledge elicitation of personal constructs. To understand how an individual perceives (understands/compares) these elements, scalar constructs about these elements then have to be elicited. E.g. using the so-called triadic method, interviewees have to compare learning object A with B and C and then state in what respects they differ. E.g. "Pick the two teachers that are most similar and tell me why. Then tell me how the third one is different." The output will be contrasted attributes (e.g. motivating vs. boring or organized vs. a mess). This procedure should be repeated until no new constructs (words) come up.

- These constructs are then reused to rate all the elements in a matrix (rating grid), usually on a simple five or seven point scale. A construct always has two poles, i.e. attribute pairs with two opposites.

- Individual grids are then analysed with multivariate statistical procedures such as two-way cluster analysis or principal component analysis.

- In addition, there exist methods to compare individual grids or to construct "common grids", e.g. for a group of experts or an even larger population. In the latter case, we can't call these "personal constructs" anymore.
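The principle outlined above can be sketched as a small data structure. This is only a minimal illustration in Python, not a reference implementation: the class name `RepertoryGrid`, its fields and methods, and the example teachers and ratings are all hypothetical and chosen for this sketch.

```python
from dataclasses import dataclass, field


@dataclass
class RepertoryGrid:
    """A rating grid: one row per construct, one column per element."""
    elements: list          # e.g. names of teachers (hypothetical)
    constructs: list        # (emergent pole, contrast pole) pairs
    scale_max: int = 5      # 5- or 7-point scale; the lowest rating is 1
    ratings: list = field(default_factory=list)  # ratings[construct][element]

    def add_ratings(self, row):
        """Append the ratings of all elements on the next construct."""
        if len(self.ratings) >= len(self.constructs):
            raise ValueError("every construct already has a row of ratings")
        if len(row) != len(self.elements):
            raise ValueError("need exactly one rating per element")
        if any(not 1 <= r <= self.scale_max for r in row):
            raise ValueError("rating outside the scale")
        self.ratings.append(list(row))

    def element_profile(self, name):
        """Return the ratings of one element across all rated constructs."""
        col = self.elements.index(name)
        return [row[col] for row in self.ratings]


# Hypothetical data: three teachers rated on two elicited constructs.
grid = RepertoryGrid(
    elements=["Teacher A", "Teacher B", "Teacher C"],
    constructs=[("motivating", "boring"), ("organized", "a mess")],
)
grid.add_ratings([1, 4, 2])   # motivating (1) ... boring (5)
grid.add_ratings([2, 5, 1])   # organized (1) ... a mess (5)
print(grid.element_profile("Teacher B"))   # [4, 5]
```

Storing one row per construct mirrors the "n constructs by m elements" layout quoted from Dillon above; the statistical procedures mentioned in the list would then operate on `grid.ratings`.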

- Additional quotations

The perception of repertory grid analysis is not the same everywhere. Below a few quotes:

According to Feixas and Alvarez, the repertory grid is applied in four basic steps: (1) The design phase is where the parameters that define the area of application are set out. (2) In the administration phase, the type of structured interview for grid elicitation and the resulting numerical matrix is defined. (3) The repertory grid data can then be subjected to a variety of mathematical analyses. (4) The structural characteristics of the construct system can then be described.

“The elements selected for the grid depend on which aspects of the interviewee's construing are to be evaluated. Elements can be elicited by either asking for role relations (e.g., your mother, employer, best friend) or by focusing on a particular area of interest. A market research study might, for example, use products representative of that market as elements (e.g., cleaning products, models of cars, etc.).”(Design Phase)

“The type of rating method used (dichotomous, ordinal or interval) determines the type of mathematical analysis to be carried out as well as the length and duration of the test administration. As before, the criteria for selection depend on the researcher's objectives and on the capacities of the person to be assessed.” (Design Phase (2))

According to Nick Milton (Repertory Grid Technique) the repertory grid technique includes four main stages.

- In stage 1, elements to analyze (e.g. concepts or observable items such as pedagogical designs or roles) are selected for the grid. A similar number of attributes that allow one to characterize each element are also defined. These attributes should either be generated with an elicitation method or can be taken from previously elicited knowledge.

- In stage 2 each concept must be rated against each attribute.

- In stage 3, a cluster analysis is performed on both the elements and the attributes. This will show similarities between elements or attributes.

- In stage 4, the knowledge engineer walks the expert through the focus grid gaining feedback and prompting for knowledge concerning the groupings and correlations shown.

Elicitation methods

Elicitation methods can vary. The basic procedures we identified from the literature are: monadic, dyadic, triadic, none, or full context form.

- In the monadic procedure, participants must describe an element with a single word or a short phrase. Then the opposite of this term is asked for.

- In the dyadic procedure, the participant is asked to look at pairs of elements and tell if they are similar or dissimilar in some way. If they are judged dissimilar, he has to explain how, again with a single word or a short phrase and again also tell the opposite of this term. If they are judged similar, then he is asked to select a third and dissimilar element and then again explain similarities and dissimilarities with simple phrases.

- The triadic procedure has been defined above, i.e. participants are given three elements, must identify two similar and a different one and then explain. The elements in each triad are usually randomly selected and then replaced for the next iteration.

- None: In some studies (in particular in applied areas such as marketing studies), the researcher may provide the constructs.

- In the full context form technique (Tan & Hunter, 2002), “the research participant is required to sort the whole pool of elements into any number of discrete piles based on whatever similarity criteria chosen by the research participant. After the sorting, the research participant will be asked to provide a descriptive title for each pile of elements. This approach is primarily used to elicit the similarity judgments.” (Siau, 2007: 5).

- Group construct elicitation is according to Siau (2007) similar to the triadic sort method. Both element identification and construct elicitation with triadic sort are done together through discussion.

- Aggregation. Idiographic (individual) repertory grids can be aggregated and, in a second phase, used as nomothetic (more positivistic) research instruments. E.g. a synthetic grid can be constructed from individual grids by coders and analysed as such, or it can be used to collect rankings again (as in the "none" version).

The knowledge elicitation procedure can be stopped when the participant stops coming up with new constructs.
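The triadic procedure with random triads and this stopping rule can be sketched as follows. This is a minimal sketch under stated assumptions: the function names, the `ask` callback that stands in for the interview, and the rule "stop after three triads in a row without a new construct" (`max_dry_rounds`) are our own illustrative choices, not part of any cited method.

```python
import random


def next_triad(elements, rng):
    """Draw three distinct elements at random. The pool itself is unchanged,
    so the same elements can be drawn again in a later triad."""
    return rng.sample(elements, 3)


def elicit(elements, ask, max_dry_rounds=3, seed=0):
    """Present random triads until `max_dry_rounds` triads in a row yield
    no new construct. `ask(triad)` stands in for the interview and returns
    an (emergent pole, contrast pole) pair, or None if nothing comes up."""
    rng = random.Random(seed)
    constructs = []
    dry = 0
    while dry < max_dry_rounds:
        answer = ask(next_triad(elements, rng))
        if answer is None or answer in constructs:
            dry += 1
        else:
            constructs.append(answer)
            dry = 0
    return constructs


# Hypothetical participant who comes up with only two constructs.
scripted = iter([("motivating", "boring"), ("organized", "a mess")])
teachers = ["Teacher A", "Teacher B", "Teacher C", "Teacher D", "Teacher E"]
found = elicit(teachers, lambda triad: next(scripted, None))
print(found)
```

In a real interview the `ask` step is of course a conversation; the sketch only shows the bookkeeping around it.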

Phrases that emerge for similarities are called the similarity pole (also called emergent pole). The opposing pole is called contrast pole or implicit pole. The numerical scale should then be consistent, e.g. the emergent poles must always have either a high or a low score. Certain software can require a direction.
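Making the direction of a scale consistent amounts to mirroring the ratings of a construct when its poles are swapped. A minimal sketch, assuming a scale that runs from 1 to `scale_max`; the function name is hypothetical and the ratings are borrowed from the "approachable" row of the teacher example further below in this article.

```python
def reverse_construct(poles, ratings, scale_max=5):
    """Swap the two poles of a construct and mirror its ratings, so that
    a rating r on a 1..scale_max scale becomes scale_max + 1 - r."""
    first, second = poles
    return (second, first), [scale_max + 1 - r for r in ratings]


poles, ratings = reverse_construct(("approachable", "intimidating"),
                                   [1, 1, 5, 4, 5, 1])
print(poles, ratings)   # ('intimidating', 'approachable') [5, 5, 1, 2, 1, 5]
```

Reversing a construct twice gives back the original poles and ratings, so the operation loses no information.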

Ranking/rating of elements in a matrix also can be done with various procedures. Examples:

- Rating: Participants must judge each element on a Likert-type scale, usually with five or seven points. E.g. Please rate yourself on the following scale or Please rate the comfort of this car model.

- Ranking: Participants can be asked to rank each element with respect to a given construct. E.g. rank 10 learning management systems in terms of "easy to use - difficult to use". A system like Dokeos would rank higher than a system like WebCT.

- Binary ranking: Yes/no with respect to the emergent (positive) pole
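The three procedures can be related to each other in code. The following is a minimal sketch: the function names are hypothetical, and both the tie handling (tied elements share the average rank) and the cut-off for the yes/no version (below the scale midpoint) are our assumptions. The ratings are the "challenging - unengaging" row of the teacher example further below, where 1 stands for the emergent pole.

```python
def to_ranks(ratings):
    """Rank elements on one construct: rank 1 goes to the lowest rating.
    Tied elements share the average of the ranks they occupy."""
    order = sorted(range(len(ratings)), key=lambda i: ratings[i])
    ranks = [0.0] * len(ratings)
    pos = 0
    while pos < len(order):
        end = pos
        while (end + 1 < len(order)
               and ratings[order[end + 1]] == ratings[order[pos]]):
            end += 1
        shared = (pos + end) / 2 + 1      # average of the 1-based positions
        for i in order[pos:end + 1]:
            ranks[i] = shared
        pos = end + 1
    return ranks


def to_binary(ratings, scale_max=5):
    """Yes/no version: True when the rating lies on the low-numbered
    half of the scale (the midpoint counts as 'no')."""
    midpoint = (scale_max + 1) / 2
    return [r < midpoint for r in ratings]


challenging = [4, 2, 3, 1, 2, 5]
print(to_ranks(challenging))    # [5.0, 2.5, 4.0, 1.0, 2.5, 6.0]
print(to_binary(challenging))   # [False, True, False, True, True, False]
```

Going from ratings to ranks or to yes/no values discards information, so the choice of procedure also constrains which analyses make sense afterwards.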

- Additional information about elicitation methods.

Below, a few quotes we found in our initial explorations:

Feixas and Alvarez outline the three methods to elicit constructs like this:

A) Elicitation of constructs using triads of elements. This is the original method used by Kelly. It involves the presentation of three elements followed by the question, "How are two of these elements similar, and thereby different from a third element?" and then "How is the third element different from the other two?" [...] B) Elicitation of constructs using dyads of elements. Epting, Schuman and Nickeson (1971) argue that more explicit contrast poles can be obtained using only two elements at a time. This procedure usually involves an initial question such as, "Do you see these people as more similar or different?" This prompt can then be followed by questions of similarity such as, "How are these two elements alike?" or "What characteristics do these two elements share?" Questions referring to differences such as "How are these two elements different?" are also appropriate. [...]

C) Elicitation of constructs using single elements. Also known as monadic elicitation, this way of obtaining constructs is the most similar to an informal conversation. It consists in asking subjects to describe in their own words the "personality" or way of being of each of the elements presented. The interviewer's task is limited to writing down the constructs as they appear and then asking for the opposite poles.

Stewart and Stewart (1981), cited by Todd A. Boyle (2005), recommend a seven-step approach for administering a repertory grid:

- Decide the purpose (e.g. why, for whom, and with what expected action), mode (e.g. interviewer-guided, interactive, interviewee-guided, shared amongst a group), and analysis (e.g. computer or manual, quantitative or qualitative) for the study.

- Choose elements by interviewer nomination (i.e. the interviewer stating that they want the interviewee's views of specific elements only), interview elicitation (i.e. questioning the interviewee to get a spread of elements over the available range), or interviewee nomination of elements.

- Elicit the constructs by presenting the elements three at a time with questions such as “In what way are two of these similar to each other and different from the third?” or “Tell me something that two of these have in common which makes them different from the third?” For specific elements, refine the question such as “Tell me something about two of these people that make them different from the third in the way they go about their job?”

- Obtain any high order constructs by laddering.

- Turn each construct into a five-point scale.

- It is not necessary to exhaust all the possible constructs by triadic comparison before going onto a full grid. Many people find that the best way is to elicit a few constructs and put them into the grid and then produce more constructs; this makes the procedure more obvious to the interviewee.

- The grid is now ready for discussion, sharing, or analysis.

Construction of repertory grid tables

An example

The following example was taken from Sarah J. Stein, Campbell J. McRobbie and Ian Ginns (2000) research on Preservice Primary Teachers' Thinking about Technology and Technology Education. We only will show parts of the tables (in order to avoid copyright problems).

“Following a process developed by Shapiro (1996), a Repertory Grid reflecting the views of the interviewed group about the technology design process was developed. The interview and survey responses were coded and categorised into a set of dipolar constructs (ten) consisting of terms and phrases commonly used by students about technology and the conduct of technology investigations (Table 1), and a set of elements (nine) of the technology process consisting of typical situations or experiences in the conduct of an investigation (Table 2). The Repertory Grid developed consisted of a seven point rating scale situated between pole positions on the individual constructs, one set for each element. A sample Repertory grid chart is shown in Table 3.”

- Table 1

- Repertory Grid - Constructs

| Label | Descriptor - One pole | Descriptor - Opposite pole |

| a. | Creating my own ideas | Just following directions |

| b. | Challenging, problematic, troublesome | Easy, simple |

| c. | Have some idea beforehand about the result | Have no idea what will result |

| d. | ... | ... |

- Table 2

- Repertory Grid - Elements

| Label | Descriptor |

| 1. | Selection of a problem for investigation by the participant |

| 2. | Identifying and exploring factors which may affect the outcome of the project |

| 3. | Decisions about materials and equipment may be needed |

| 4. | Drawing of plans may be involved |

| 5. | Building models and testing them may be required |

- Table 3

- Sample Repertory Grid Chart

| The following statement is a brief description of a typical experience you, as a participant, might have while conducting a design and technology project. |

| ELEMENT #1: Selection of a problem for investigation by the participant. |

| Rate this experience on the scale of 1 to 7 below for the following constructs, or terms and phrases, you may use when describing the steps in conducting a design and technology project. CIRCLE YOUR RESPONSE. |

| a. | Creating my own ideas | 1 2 3 4 5 6 7 | a. | Just following directions |

| b. | Challenging, problematic, troublesome | 1 2 3 4 5 6 7 | b. | Easy, simple |

| c. | Have some idea beforehand about the result | 1 2 3 4 5 6 7 | c. | Have no idea what will result |

| d. | Using the imagination or spontaneous ideas | 1 2 3 4 5 6 7 | d. | Recipe-like prescriptive work |

All-in-one grids

Instead of presenting a new grid table for each element, one also could present participants a grid that includes the elements as a row. In this case, users have to insert numbers in the cells. This is difficult on paper, but a bit easier with a computer interface we believe.

However, if you have few elements, a paper version can be done easily. E.g. for analysing the perception of different teachers, Steinkuehler and Derry's Repertory Grid tutorial provides the following example about teacher rating.

| Similarity or Emergent Pole (1) | Prof. Apple | Prof. Bean | Prof. Carmel | Prof. Dim | Prof. Enuf | Prof. Fly | Contrast Pole (5) |

| approachable | 1 | 1 | 5 | 4 | 5 | 1 | intimidating |

| laid-back | 3 | 3 | 1 | 1 | 1 | 1 | task-master |

| challenging | 4 | 2 | 3 | 1 | 2 | 5 | unengaging |

| spontaneous lecturer | ... | ... | ... | ... | ... | ... | scripted lecturer |

| etc. | | | | | | | etc. |

Two poles – the similarity or emergent pole and the contrast pole – are listed in columns at either end. Elements (in the middle columns) are rated in terms of the extent to which they belong to either of the poles of a construct. The ratings are placed in a row of the cells between the corresponding poles. In the original table, red dots indicate the elements used in each triad.

Analysis techniques

Individual grids can be analyzed using various statistical data reduction techniques on both rows and columns. The most popular techniques seem to be cluster analysis and factor analysis (principal component analysis with factor scores for elements computed). Daniel K. Schneider imagines that one also could use correspondence analysis if the scales used are ordinal or nominal.

Boyle (2005:184) lists some data analysis strategies:

- Frequency counts

- E.g. “Count the number of times particular elements or particular constructs are mentioned. Frequency counts are most often used to find common trends from a sample of individuals”.

- Content analysis

- “Select a series of categories into which elements or constructs fall and then assign the elements or constructs to categories”. We rather suggest reflecting on the coding methodology first, e.g. categories also could emerge. Qualitative data analysis techniques could be useful to reduce elements and constructs elicited from several interviews. E.g. they could be used to define one or more common lists of elements and constructs that then can be administered in a standard way to a larger population, or be used for "group elicitation".

- Visual focusing

- “The use of a check/cross system instead of a scale. Elements are compared for common checks or crosses.” A related alternative seems to be software that colorizes values in the two-way table according to some thresholds.

- Statistical analysis

- “Examples include cluster analysis and principal component analysis” (see below)

- Combination of techniques

- “Using a combination of the above data analysis techniques in the same study”
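The first strategy in this list, frequency counts, is easy to illustrate. The sketch below is only an example: the three interviews and their constructs are hypothetical data, and it assumes that constructs from different interviews have already been coded into identical wording (which in practice requires the content analysis step).

```python
from collections import Counter

# Hypothetical constructs elicited in three separate interviews.
interviews = [
    [("motivating", "boring"), ("organized", "a mess")],
    [("organized", "a mess"), ("approachable", "intimidating")],
    [("motivating", "boring"), ("organized", "a mess")],
]

# Count how often each construct is mentioned across all interviews.
counts = Counter(construct for grid in interviews for construct in grid)
for (emergent, contrast), n in counts.most_common():
    print(f"{emergent} - {contrast}: {n}")
```

With these made-up data, "organized - a mess" is mentioned in all three interviews and would be a candidate for a common construct list.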

Simple descriptive statistics and visualizations

A simple descriptive technique to look at multiple grids that use the same constructs (e.g. as in some marketing research or knowledge engineering) is to simply chart the values for each participant as a graph between the poles (opposite attributes). Otherwise, with grids that differ between individuals, it gets more complicated ...

Visual focusing

Visual focusing allows one to identify:

- Elements that load mostly on the emergent (or on the implicit) poles of the constructs, e.g. to identify the "best" software according to the constructs used.

- Constructs whose ratings are mostly emergent or mostly implicit, e.g. to identify constructs whose positive pole hardly ever shows up, such as something that almost no software can do.

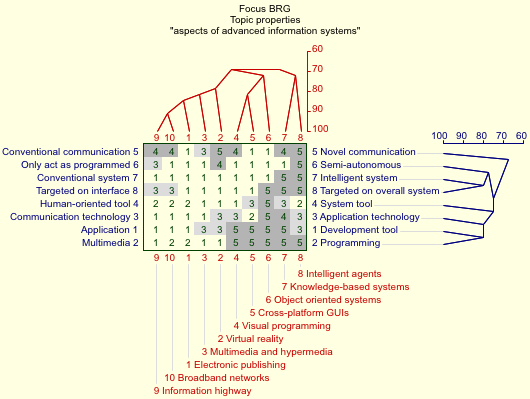

Here is an example generated with WebGrid III (generated/retrieved 16:49, 13 February 2009 (UTC)). It concerns topics (elements) and aspects (constructs) of advanced information systems. Daniel K. Schneider took the data that came with the example, i.e. we don't know who filled them in. If you (reader) don't agree with the grid (elements, constructs and ratings) you can go to the system and change any of these. The purpose here is not to discuss "advanced information systems" but to present an analysis technique ...

The simple grid data look like this; as plain numbers they are not very readable.

Context: aspects of advanced information systems, 10 topics, 8 properties

| No. | Left pole (rating 1) | 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 | 9 | 10 | Right pole (rating 5) |

| 1 | Development tool | 5 | 3 | 3 | 1 | 1 | 1 | 1 | 3 | 5 | 5 | Application |

| 2 | Multimedia | 2 | 1 | 1 | 5 | 5 | 5 | 5 | 5 | 1 | 2 | Programming |

| 3 | Communication technology | 1 | 3 | 1 | 3 | 2 | 5 | 4 | 3 | 1 | 1 | Application technology |

| 4 | Human-oriented tool | 2 | 1 | 1 | 1 | 3 | 5 | 3 | 2 | 2 | 2 | System tool |

| 5 | Conventional communication | 1 | 5 | 3 | 4 | 1 | 1 | 4 | 5 | 4 | 4 | Novel communication |

| 6 | Only act as programmed | 1 | 4 | 1 | 1 | 1 | 1 | 1 | 5 | 3 | 1 | Semi-autonomous |

| 7 | Conventional system | 1 | 1 | 1 | 1 | 1 | 1 | 5 | 5 | 1 | 1 | Intelligent system |

| 8 | Targeted on interface | 1 | 1 | 1 | 1 | 1 | 5 | 5 | 5 | 3 | 3 | Targeted on overall system |

Topics (columns): 1 Electronic publishing, 2 Virtual reality, 3 Multimedia and hypermedia, 4 Visual programming, 5 Cross-platform GUIs, 6 Object oriented systems, 7 Knowledge-based systems, 8 Intelligent agents, 9 Information highway, 10 Broadband networks.

A picture with color codes generated by the system looks like the one shown above and allows one to quickly identify high "loadings" of emergent poles (which are to the right). I.e. "5" in the first row means "totally application".
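The idea of colouring cells by threshold can be imitated in plain text. The sketch below is only an illustration and not how WebGrid renders its picture: the function name and the thresholds (ratings up to 2 count as "left pole", ratings from 4 as "right pole") are assumptions. `GRID` holds the example ratings from above, one row per property and one column per topic.

```python
# Ratings from the WebGrid example above (8 properties x 10 topics).
GRID = [
    [5, 3, 3, 1, 1, 1, 1, 3, 5, 5],   # Development tool -- Application
    [2, 1, 1, 5, 5, 5, 5, 5, 1, 2],   # Multimedia -- Programming
    [1, 3, 1, 3, 2, 5, 4, 3, 1, 1],   # Communication -- Application technology
    [2, 1, 1, 1, 3, 5, 3, 2, 2, 2],   # Human-oriented tool -- System tool
    [1, 5, 3, 4, 1, 1, 4, 5, 4, 4],   # Conventional -- Novel communication
    [1, 4, 1, 1, 1, 1, 1, 5, 3, 1],   # Only act as programmed -- Semi-autonomous
    [1, 1, 1, 1, 1, 1, 5, 5, 1, 1],   # Conventional -- Intelligent system
    [1, 1, 1, 1, 1, 5, 5, 5, 3, 3],   # Targeted on interface -- overall system
]


def focus_marks(row, low=2, high=4):
    """Mark each rating: '-' near the left pole, '+' near the right pole,
    '.' for ratings in between (thresholds are illustrative)."""
    return "".join("-" if r <= low else "+" if r >= high else "." for r in row)


for number, row in enumerate(GRID, start=1):
    print(number, focus_marks(row))
```

The first line printed is `1 +..----.++`, i.e. topics 1, 9 and 10 sit near the "Application" pole of the first property.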

Cluster analysis

We found that hierarchical cluster analysis seems to be the most popular. Typically, two-way clustering (co-clustering or biclustering) is done, i.e. both elements and constructs are clustered and then sorted according to proximity. Then a dendrogram can be drawn on top (elements) and to the right (constructs) of the repertory grid. Data assumptions for cluster analysis are less strict than for factor analysis. However, there exist many variants of cluster analysis, and Boyle is wrong when he quotes Stewart and Stewart (1981) that "it uses non-parametric statistics" and "makes no assumptions about the absolute size of the difference". Most analysis variants make such assumptions. However, there exist variants that can deal with ordinal and even nominal data. Let's work through the example from the WebGrid III system that we introduced above in the "visual focusing" section and let's recall that these are not our data.

The WebGrid cluster analysis algorithm is based on the FOCUS algorithm (Shaw, 1980). It uses distance measures to reorder the grid, placing similarly rated constructs/elements next to each other and showing a dendrogram for each axis. This is a kind of two-way hierarchical cluster analysis for both elements and constructs. This algorithm is available through the WebGrid III and the more recent WebGrid IV online systems.
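To give an idea of what such a two-way reordering involves, here is a simplified stand-in. It is explicitly not the FOCUS algorithm: it uses plain single-linkage agglomerative clustering on city-block distances, ignores the possibility of reversing constructs, and all function names are hypothetical. `GRID` holds the example ratings from the "visual focusing" section (rows are properties, columns are topics 1 to 10).

```python
GRID = [
    [5, 3, 3, 1, 1, 1, 1, 3, 5, 5],
    [2, 1, 1, 5, 5, 5, 5, 5, 1, 2],
    [1, 3, 1, 3, 2, 5, 4, 3, 1, 1],
    [2, 1, 1, 1, 3, 5, 3, 2, 2, 2],
    [1, 5, 3, 4, 1, 1, 4, 5, 4, 4],
    [1, 4, 1, 1, 1, 1, 1, 5, 3, 1],
    [1, 1, 1, 1, 1, 1, 5, 5, 1, 1],
    [1, 1, 1, 1, 1, 5, 5, 5, 3, 3],
]


def distance(u, v):
    """City-block distance between two rating vectors."""
    return sum(abs(x - y) for x, y in zip(u, v))


def cluster_order(vectors):
    """Single-linkage agglomerative clustering. Returns the list of merge
    steps (cluster, cluster, distance) and the final left-to-right order
    of the items (0-based indices)."""
    clusters = [[i] for i in range(len(vectors))]
    merges = []
    while len(clusters) > 1:
        best = None
        for a in range(len(clusters)):
            for b in range(a + 1, len(clusters)):
                d = min(distance(vectors[i], vectors[j])
                        for i in clusters[a] for j in clusters[b])
                if best is None or d < best[0]:
                    best = (d, a, b)
        d, a, b = best
        merges.append((clusters[a], clusters[b], d))
        merged = clusters[a] + clusters[b]
        clusters = [c for k, c in enumerate(clusters) if k not in (a, b)]
        clusters.append(merged)
    return merges, clusters[0]


columns = [list(col) for col in zip(*GRID)]        # one vector per topic
element_merges, element_order = cluster_order(columns)
_, construct_order = cluster_order(GRID)           # one vector per property

# Rearranged grid: similar properties and similar topics end up adjacent.
reordered = [[GRID[r][c] for c in element_order] for r in construct_order]
print(element_merges[0])   # ([8], [9], 3): topics 9 and 10 merge first
```

The sequence of merge steps is the information a dendrogram displays; drawing it is left out here.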

The clustering of an example repertory grid on advanced information systems and their aspects (constructs about them) was generated from the WebGrid-III demo page (without modifying anything, retrieved 16:49, 13 February 2009 (UTC)).


If you compare this picture with the picture in the visual focusing section, you can see that (1) it includes dendrograms for both elements and constructs and that (2) elements and constructs have been rearranged so that the dendrograms show proximities between items.

Now we can identify types (clusters), e.g. we can see that visual programming and cross-platform GUIs are close, and that knowledge-based systems and intelligent agents are fairly different from each other but even more different from all the rest. Distances between items are measured in terms of horizontal distances in the dendrogram. E.g. element 9 "Information highway" is closely associated (91%) with element 10 "Broadband networks". WebGrid III can generate a little table showing element matches in percent. This is useful if you are interested in quoting more precise information than what you can see in the "red" dendrogram.

| Topic | 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 | 9 | 10 |

| 1 | 100 | 59 | 81 | 59 | 72 | 44 | 31 | 28 | 75 | 84 |

| 2 | 59 | 100 | 78 | 69 | 50 | 28 | 34 | 56 | 72 | 62 |

| 3 | 81 | 78 | 100 | 72 | 66 | 38 | 38 | 34 | 75 | 78 |

| 4 | 59 | 69 | 72 | 100 | 81 | 59 | 66 | 50 | 53 | 62 |

| 5 | 72 | 50 | 66 | 81 | 100 | 72 | 59 | 38 | 47 | 56 |

| 6 | 44 | 28 | 38 | 59 | 72 | 100 | 69 | 41 | 31 | 41 |

| 7 | 31 | 34 | 38 | 66 | 59 | 69 | 100 | 72 | 38 | 47 |

| 8 | 28 | 56 | 34 | 50 | 38 | 41 | 72 | 100 | 47 | 44 |

| 9 | 75 | 72 | 75 | 53 | 47 | 31 | 38 | 47 | 100 | 91 |

| 10 | 84 | 62 | 78 | 62 | 56 | 41 | 47 | 44 | 91 | 100 |
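The percentages in this table are consistent with a simple matching score: sum the absolute rating differences between two topics over all properties, divide by the largest possible sum, and subtract from 100%. The sketch below assumes this formula (we inferred it from the numbers and did not take it from the WebGrid documentation) and a rating scale from 1 to 5; the function name is hypothetical. It reproduces the entries we checked by hand, e.g. 91 for topics 9 and 10.

```python
# Ratings from the WebGrid example (rows: properties, columns: topics 1-10).
GRID = [
    [5, 3, 3, 1, 1, 1, 1, 3, 5, 5],
    [2, 1, 1, 5, 5, 5, 5, 5, 1, 2],
    [1, 3, 1, 3, 2, 5, 4, 3, 1, 1],
    [2, 1, 1, 1, 3, 5, 3, 2, 2, 2],
    [1, 5, 3, 4, 1, 1, 4, 5, 4, 4],
    [1, 4, 1, 1, 1, 1, 1, 5, 3, 1],
    [1, 1, 1, 1, 1, 1, 5, 5, 1, 1],
    [1, 1, 1, 1, 1, 5, 5, 5, 3, 3],
]


def element_match(grid, a, b, scale_min=1, scale_max=5):
    """Percentage match between topics a and b (1-based column numbers)."""
    diff = sum(abs(row[a - 1] - row[b - 1]) for row in grid)
    worst = (scale_max - scale_min) * len(grid)   # largest possible difference
    return round(100 * (1 - diff / worst))


print(element_match(GRID, 9, 10))   # 91
print(element_match(GRID, 1, 2))    # 59
```

For topics 9 and 10 the ratings differ by 3 points in total out of a possible 32 (8 properties times 4 scale steps), which gives 1 - 3/32 = 90.6%, rounded to 91%.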

The same can be done with construct matches, e.g. construct 1 ("application") is very close (80%) to construct 2 ("multimedia").

| Property | 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 |

| 1 | 30 | 20 | 40 | 45 | 60 | 62 | 45 | 45 |

| 2 | 80 | 10 | 70 | 70 | 45 | 43 | 65 | 70 |

| 3 | 80 | 30 | 40 | 75 | 55 | 62 | 70 | 70 |

| 4 | 60 | 40 | 40 | 40 | 40 | 57 | 65 | 75 |

| 5 | 50 | 60 | 55 | 75 | 30 | 67 | 60 | 60 |

| 6 | 43 | 57 | 43 | 47 | 38 | 15 | 77 | 68 |

| 7 | 55 | 35 | 30 | 35 | 40 | 22 | 0 | 80 |

| 8 | 55 | 35 | 30 | 35 | 50 | 43 | 20 | 20 |
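Unlike the element table, this table is not symmetric. The entries we checked by hand are consistent with the following reading, which is our inference and not taken from the WebGrid documentation: values above the diagonal are direct matches between two properties, while values on and below the diagonal are matches after one of the two properties has been reversed (poles swapped). The sketch assumes a 1 to 5 scale; the function name is hypothetical.

```python
# Ratings from the WebGrid example (rows: properties 1-8, columns: topics).
GRID = [
    [5, 3, 3, 1, 1, 1, 1, 3, 5, 5],
    [2, 1, 1, 5, 5, 5, 5, 5, 1, 2],
    [1, 3, 1, 3, 2, 5, 4, 3, 1, 1],
    [2, 1, 1, 1, 3, 5, 3, 2, 2, 2],
    [1, 5, 3, 4, 1, 1, 4, 5, 4, 4],
    [1, 4, 1, 1, 1, 1, 1, 5, 3, 1],
    [1, 1, 1, 1, 1, 1, 5, 5, 1, 1],
    [1, 1, 1, 1, 1, 5, 5, 5, 3, 3],
]


def construct_match(grid, a, b, reverse=False, scale_min=1, scale_max=5):
    """Percentage match between properties a and b (1-based row numbers).
    With reverse=True the poles of property b are swapped first."""
    row_a = grid[a - 1]
    row_b = grid[b - 1]
    if reverse:
        row_b = [scale_min + scale_max - r for r in row_b]
    diff = sum(abs(x - y) for x, y in zip(row_a, row_b))
    worst = (scale_max - scale_min) * len(row_a)
    return round(100 * (1 - diff / worst))


print(construct_match(GRID, 1, 2))                 # 20 (row 1, column 2)
print(construct_match(GRID, 1, 2, reverse=True))   # 80 (row 2, column 1)
```

On this reading, the 80% quoted above for constructs 1 and 2 is a reversed match: the "Application" pole of construct 1 goes together with the "Multimedia" pole of construct 2, while the direct match between the two constructs is only 20%.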

In our opinion, there are two main advantages of cluster analysis:

- It is simple to understand as compared to factor analysis

- It allows one to compare grids having only the same elements (comparing different types of elements) or the same constructs (comparing different clusterings of the same constructs).

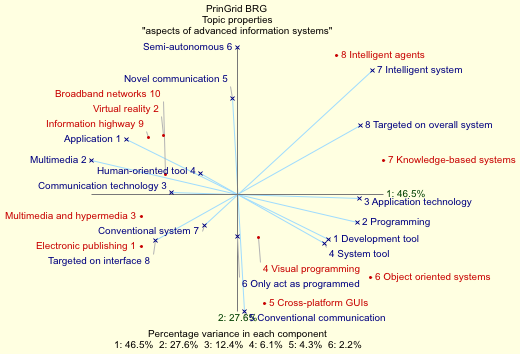

Factor analysis

In order to discuss factor analysis, we again take the same "WebGrid" example discussed in the "cluster analysis" section above, but this time we use the WebGrid IV system (which, as of February 2009, is still experimental).

In WebGrid IV, the cluster analysis generates a prettier picture, but the result is the same (emergent poles to the right).

The following picture shows a plot of the two most important factors.

Below are tables with loadings of the topics (elements) and loading of the properties (constructs).

Loadings of the Topics

* 1 2 3 4 5 6

***************************************

1 * -1.54 -0.85 0.73 0.46 0.50 0.23 Electronic publishing

2 * -1.19 0.96 -0.96 -1.01 -0.03 0.28 Virtual reality

3 * -1.55 -0.36 -0.28 0.25 -0.37 0.43 Multimedia and hypermedia

4 * 0.34 -0.70 -1.50 0.17 -0.23 -0.51 Visual programming

5 * 0.45 -1.75 -0.45 0.23 0.66 0.01 Cross-platform GUIs

6 * 2.14 -1.34 0.96 -0.97 -0.13 -0.05 Object oriented systems

7 * 2.35 0.55 0.05 0.62 -0.64 0.41 Knowledge-based systems

8 * 1.59 2.24 -0.02 0.21 0.73 -0.10 Intelligent agents

9 * -1.43 0.92 0.72 -0.29 0.00 -0.17 Information highway

10 * -1.15 0.33 0.75 0.33 -0.50 -0.52 Broadband networks

Loadings of the Properties

* 1 2 3 4 5 6

***************************************

1 * -1.98 0.99 1.28 0.27 0.24 -0.31 Development tool--Application

2 * 2.61 -0.61 -0.48 0.53 0.54 -0.63 Multimedia--Programming

3 * 1.85 -0.06 -0.29 -0.89 -0.29 0.18 Communication technology--Application technology

4 * 1.22 -0.70 1.07 -0.43 0.09 0.03 Human-oriented tool--System tool

5 * -0.13 2.10 -0.90 -0.05 -0.70 -0.39 Conventional communication--Novel communication

6 * -0.01 1.87 -0.31 -0.80 0.99 0.04 Only act as programmed--Semi-autonomous

7 * 1.66 1.53 0.02 0.97 0.13 0.59 Conventional system--Intelligent system

8 * 2.02 1.13 1.41 -0.14 -0.39 -0.18 Targeted on interface--Targeted on overall system

The three most important factors explain 86.5% of the variance. Factor one explains 46.5%, factor two 27.6% and factor three 12.4%. A first analytical task now is to label these factors.

- Factor one strongly loads Multimedia--Programming and a bit less Development tool--Application (negatively), Communication technology--Application technology and Targeted on interface--Targeted on overall system. Some of these variables are also correlated with factor 2. We could call this factor user focused -- tool oriented.

- Factor two strongly loads Only act as programmed--Semi-autonomous, Conventional communication--Novel communication as well as Conventional system--Intelligent system. We could call this factor smart/networked system -- dumb/isolated system.

- Factor three is more difficult to interpret.

We get similar results as in the cluster analysis, except for "novel communication", which shows up as a really distinct variable there.

If we now look at the elements (the topics), we can see that they all seem to score on two factors (i.e. we don't have any element that sits on an axis). The results could be interpreted as follows. We find four different types of systems:

- User focused & smart/networked systems: Broadband networks, Virtual reality, Information highway

- User focused & dumb/isolated systems: Multimedia and hypermedia, Electronic publishing

- Tool oriented & smart/networked systems: Intelligent agents and knowledge-based systems

- Tool oriented & dumb/isolated systems: Visual programming, object-oriented systems and cross-platform GUIs.
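
The four types listed above can be reproduced from the signs of the first two factor loadings. The following is a minimal sketch, not WebGrid output: the loadings are copied from the element table above, while the mapping of signs to labels (negative factor 1 = user focused, positive factor 2 = smart/networked) is an assumption inferred from that table, and the function names are hypothetical.

```python
# Factor 1 and factor 2 loadings of the elements, copied from the table above.
ELEMENT_LOADINGS = {
    "Electronic publishing": (-1.54, -0.85),
    "Virtual reality": (-1.19, 0.96),
    "Multimedia and hypermedia": (-1.55, -0.36),
    "Visual programming": (0.34, -0.70),
    "Cross-platform GUIs": (0.45, -1.75),
    "Object oriented systems": (2.14, -1.34),
    "Knowledge-based systems": (2.35, 0.55),
    "Intelligent agents": (1.59, 2.24),
    "Information highway": (-1.43, 0.92),
    "Broadband networks": (-1.15, 0.33),
}


def system_type(f1, f2):
    """Label an element by the signs of its first two factor loadings.

    Assumption: negative factor 1 means "user focused", positive means
    "tool oriented"; positive factor 2 means "smart/networked", negative
    means "dumb/isolated".
    """
    focus = "user focused" if f1 < 0 else "tool oriented"
    smartness = "smart/networked" if f2 > 0 else "dumb/isolated"
    return f"{focus} & {smartness}"


def group_by_type(loadings):
    """Group element names by their sign-based type label."""
    groups = {}
    for name, (f1, f2) in loadings.items():
        groups.setdefault(system_type(f1, f2), []).append(name)
    return groups


if __name__ == "__main__":
    for label, names in group_by_type(ELEMENT_LOADINGS).items():
        print(f"{label}: {', '.join(names)}")
```

Splitting at zero is the simplest possible rule; elements with loadings close to zero (e.g. Visual programming on factor 1) are only weakly attached to their type.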

However, keep in mind that one should not interpret a factor analysis and talk about types of elements or constructs in the same way as in the cluster analysis. The plot only shows elements in terms of two factors! Indeed, we find for example that broadband networks and information highway are very close in this plot, and so is virtual reality, but electronic publishing is not, in contrast to the cluster analysis.

The main advantages of principal component analysis are, in Daniel K. Schneider's opinion, the following:
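
The closeness of elements in the two-factor plane can be checked with plain Euclidean distances. This is a small sketch under the assumption that the plot uses the factor 1 and factor 2 loadings from the element table as coordinates; the helper name is hypothetical.

```python
import math

# Factor 1 and factor 2 loadings for four elements, taken from the table above.
POINTS = {
    "Broadband networks": (-1.15, 0.33),
    "Information highway": (-1.43, 0.92),
    "Virtual reality": (-1.19, 0.96),
    "Electronic publishing": (-1.54, -0.85),
}


def plane_distance(a, b):
    """Euclidean distance between two elements in the factor 1 / factor 2 plane."""
    return math.dist(POINTS[a], POINTS[b])


if __name__ == "__main__":
    reference = "Broadband networks"
    for other in POINTS:
        if other != reference:
            print(f"{reference} - {other}: {plane_distance(reference, other):.2f}")
```

With these loadings, information highway and virtual reality both lie well under one unit from broadband networks, while electronic publishing lies more than one unit away.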

- We can display both elements and constructs in the same graphic.

- We can extract yet a new layer of data reduction, e.g. the two (or more) principal factors of constructs.

Disclaimer/reminder: This discussion was made with a grid that already was filled in, i.e. we have been discussing the mental model of some unknown Canadian (probably).

Aggregation

While repertory grid techniques are most often used to analyse individuals, one can also aim to aggregate individual grids per construct and/or element (e.g. Bell & Vince, 2002; Harter & Erbes, 2004; Lee and Truex, 2000; Whyte & Bytheway, 1996).

In particular, in nomothetic confirmatory research, researchers try to group constructs (and maybe elements) and then perform analysis on a grid made from common elements. In order to understand individual constructs, one also can perform "laddering" or "pyramiding" interviews, i.e. ask "why" and "how" questions to the interviewees.

Coding for grouping constructs should be done by at least two persons, and their agreement should be checked with an intercoder-reliability measure.
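
As an illustration of such a reliability check, here is a minimal sketch of Cohen's kappa for two coders. The text does not name a specific measure, so the choice of kappa is an assumption, and the category labels in the example are invented.

```python
from collections import Counter


def cohen_kappa(codes_a, codes_b):
    """Cohen's kappa for two coders who assigned one category per construct."""
    if len(codes_a) != len(codes_b) or not codes_a:
        raise ValueError("both coders must code the same non-empty set of constructs")
    n = len(codes_a)
    # Proportion of constructs on which the two coders agree.
    observed = sum(a == b for a, b in zip(codes_a, codes_b)) / n
    # Agreement expected by chance, from each coder's category frequencies.
    freq_a = Counter(codes_a)
    freq_b = Counter(codes_b)
    expected = sum(freq_a[c] * freq_b[c] for c in freq_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both coders used a single identical category throughout
    return (observed - expected) / (1 - expected)


if __name__ == "__main__":
    # Hypothetical categories assigned to six elicited constructs.
    coder_a = ["skills", "skills", "attitude", "attitude", "knowledge", "knowledge"]
    coder_b = ["skills", "skills", "attitude", "knowledge", "knowledge", "knowledge"]
    print(f"kappa = {cohen_kappa(coder_a, coder_b):.2f}")
```

Kappa corrects raw agreement for chance; with more than two coders, a measure such as Fleiss' kappa or Krippendorff's alpha would be needed instead.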

(more needed ...)

Repertory grid analysis in specific fields

Below we summarize a few projects that used repertory grids. The purpose is to reveal a few questions, methods and results that provide Daniel K. Schneider with a few ideas on how to conduct:

- studies of technologies

- studies of design

- studies of users

- studies of designers/developers behind the designs and technologies

Therefore, these short summaries are not meant to be representative and were not done in the same way. We simply wrote down a few points that may be of interest to a researcher in educational technology.

Human resource management and development

Repertory grid techniques have been used in a variety of domains to gather a picture of a set of people's profiles in an organization, or to come up with a more general set of typical profiles a job description could have (e.g. managers, as discussed below in the study about training needs analysis). Above, we briefly presented Steinkuehler and Derry's method for teacher assessment.

Hunter (1997) conducted an analysis of information systems analysts, i.e. “to identify the qualities of what constitutes an interpretation of an `excellent' systems analyst”. A set of participants working with systems analysts was asked to identify up to six systems analysts; then a triadic elicitation method was used to extract features (positive and negative construct poles) of ideal or incompetent analysts. Laddering (Stewart & Stewart, 1981), i.e. a series of "why" and "how" questions to the participant, provided more detail, i.e. defined more clearly what he/she meant by the more general construct. For example (Hunter, 1997: 75), a participant's definition of "good user rapport" included "good relationship on all subjects" (work, interests, family), "user feels more comfortable" and also behavioral observations in answer to "how is this done?" (good listener, finds out user's interests, doesn't forget, takes time to answer user's questions, speaks in terms users can understand, etc.).

The constructs that emerged were:

- 1. Delegator -- does work himself

- 2. Informs everyone -- keeps to himself

- 3. Good user rapport -- no user rapport

- 4. Regular feedback -- appropriate feedback

- 5. Knows detail -- confused

- 6. Estimates based on staff -- estimates based on himself

- 7. User involvement -- lack of user involvement

The rating of all analysts (elements) was done on a nine-point scale, which allows (if desired) each analyst to be placed at a different position. For each construct, participants had to order a set of six analysts plus a hypothetical "ideal" one and an "incompetent" one, i.e. a total of 8 cards.

A similar study was conducted by Siau et al. (2007) on important characteristics of software development team members. For example, they found that interpersonal/communication skills and teamwork orientation are important, but these are considered important characteristics of team members in any project. They also found “some constructs/categories that are unique to team members of IS development projects, namely learning ability, multidimensional knowledge and professional orientation.”

Repertory grids can also be used for transformative purposes. E.g. Todd Boyle (2005) conducted a study entitled "Improving team performance using repertory grids". The research presented “a means by which human resource managers, hiring personnel, and team leaders can easily determine essential skills needed on the IT teams of the organization, thereby deriving a "wish list" from key IT groups as to the desired non-technical characteristics of potential new team members.” Boyle used a standard triadic elicitation format where members of an IT group had to evaluate six programmers.

Training needs analysis

Peters (1994:23), in the context of management education, argued that “The real challenge underlying any training needs analysis (TNA) lies not with working out what training a group of individuals needs but with identifying what the good performers in that group actually do. It is only when you have a benchmark of good performance that you can look to see how everybody measures up”. Peters (1994:28) argued for the use of repertory grids on the following grounds:

- It provides a means to capture subjective ideas and viewpoints and it helps people to focus their views and opinions.

- It can help to probe areas and viewpoints of which managers may be unaware, and as such it can be a way of generating new managerial insights.

- It helps individual managers to understand how they view good/poor performance.

- It provides a representation of the manager's own world as it really is - and this in turn can help provide a clearer picture of how an organization is actually performing.

- The technique uses real people to identify real needs [..].

- It does not seek to fit people's training needs into existing [...] training plans. As a result, what can emerge is a definition of one or more areas of real weakness within a department or organization. [..]

Design and human computer interaction

Repertory grid analysis seems to be quite popular in human-computer interaction at large, e.g. we found design studies (Hassenzahl and Wessler, 2000), search engine evaluation (Crudge & Johnson, 2004), models of text (Dillon and McKnight, 1990) and elicitation of knowledge for expert systems (Shaw and Gaines, 1989).

- Design of artifacts

The design problem described by Hassenzahl & Wessler was how to evaluate early prototypes made in parallel. “The user-based evaluation of artifacts in a parallel design situation requires an efficient but open method that produces data rich and concrete enough to guide design.” (Hassenzahl & Wessler, 2000:453). Unstructured methods (e.g. interviews or observations) require a huge amount of work. Conversely, the drawback of structured methods like questionnaires is their "insensitivity to topics, thoughts, and feelings - in short, information - that do not fit into the predetermined structure." (idem, 442). “The most important advantages of the RGT are (a) its ability to gather design-relevant information, (b) its ability to illuminate important topics without the need to have a preconception of these, (c) its relative efficiency, and (d) the wide variety of types of analyses that can be applied to the gathered data.” (Hassenzahl & Wessler, 2000:455).

- Models of Text.

Dillon and McKnight (1990:Abstract) found that “individuals construe texts in terms of three broad attributes: why read them, what type of information they contain, and how they are read. When applied to a variety of texts these attributes facilitate a classificatory system incorporating both individual and task differences and provide guidance on how their electronic versions could be designed.”

- Knowledge elicitation for expert systems

Mildred Shaw and Brian Gaines led several studies on knowledge elicitation. One particularly interesting problem was “that experts may share only parts of their terminologies and conceptual systems. Experts may use the same term for different concepts, use different terms for the same concept, use the same term for the same concept, or use different terms and have different concepts. Moreover, clients who use an expert system have even less likelihood of sharing terms and concepts with the experts who produced it.” (Shaw & Gaines, 1989). The authors summarize the situation with the following figure.

The methodology for eliciting and analyzing consensus, conflict, correspondence and contrast in a group of experts can be summarized as follows:

- The group of experts comes to an agreement over a set of entities which instantiate the relevant domain. E.g. the union of all entities that can be extracted from individual elicitations.

- Each expert individually elicits attributes and values for the agreed entities. We will then find either correspondence or contrast. All attributes of the individual grids are mapped. Does one expert have an attribute that can be used to make the same distinctions between the entities as another expert does (correspondence), or does an attribute in one system have no matching attribute in the other (contrast)?

- “In phase 3 each expert individually exchanges elicited conceptual systems with every other expert, and fills in the values for the agreed entities on the attributes used by the other experts. [...] The result is a map showing consensus when attributes with the same labels are used in the same way and conflict when they are not [..]”

- Depending on the purpose of the study, one can then, for instance, identify subgroups of experts who think and act in similar ways or negotiate a common solution if there is a need for it.
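
The four situations distinguished above can be sketched as a small classification rule. This is only an illustration, not the measure used by Shaw & Gaines: it assumes that two experts rated the same agreed entities on one attribute each, treats attributes as "used in the same way" when the mean absolute rating difference stays under a hypothetical threshold, and compares labels by simple string equality.

```python
def mean_abs_difference(ratings_a, ratings_b):
    """Average absolute difference between two rating vectors of equal length."""
    if len(ratings_a) != len(ratings_b) or not ratings_a:
        raise ValueError("both experts must rate the same non-empty set of entities")
    return sum(abs(a - b) for a, b in zip(ratings_a, ratings_b)) / len(ratings_a)


def classify_pair(term_a, ratings_a, term_b, ratings_b, threshold=1.0):
    """Classify a pair of attributes from two experts.

    Assumption: `threshold` is an arbitrary cut-off chosen for illustration.
    """
    same_term = term_a.strip().lower() == term_b.strip().lower()
    same_use = mean_abs_difference(ratings_a, ratings_b) <= threshold
    if same_term and same_use:
        return "consensus"        # same term, same distinctions
    if same_term:
        return "conflict"         # same term, different distinctions
    if same_use:
        return "correspondence"   # different terms, same distinctions
    return "contrast"             # different terms, different distinctions


if __name__ == "__main__":
    # Hypothetical ratings of four agreed entities on a 1-5 scale.
    print(classify_pair("risky", [1, 5, 3, 4], "risky", [1, 4, 3, 4]))
    print(classify_pair("risky", [1, 5, 3, 4], "risky", [5, 1, 3, 1]))
    print(classify_pair("risky", [1, 5, 3, 4], "uncertain", [1, 5, 2, 4]))
    print(classify_pair("risky", [1, 5, 3, 4], "costly", [5, 1, 5, 1]))
```

In a real study each attribute of one expert would be compared against all attributes of the other, and a reversed scale would have to be considered as well.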

The Web

- Representation of information space

Cliff McKnight (2000) analysed the representation of information sources. Eleven sources were identified by the researcher, i.e. Library (books), E-mail, NewsGroup, Newspaper, Television, Radio, Journals (paper), Colleagues, Conferences, Journals (electronic), World Wide Web. “These elements were presented in triads in order to elicit constructs. The triads were chosen such that no pair of elements appeared in more than one triad. [...] 10 constructs were elicited, and each element was rated on each construct as it was elicited, using a 1-5 scale.” (McKnight, 2000).

The resulting repertory grid was then analysed with a two-way hierarchical cluster analysis using the FOCUS program (Shaw, 1980). This allowed an analysis of both construct clusters and element clusters, which we can't reproduce here. The author used a 75% cutoff point to identify interesting clusters. E.g. a typical result regarding construct clusters was that “elements that are seen as "text" also have a strong tendency to be seen as "not much surfing opportunity", and as being "single focus". Similarly, elements that are seen as "quality controlled" also have a strong tendency to be seen as "historical" and "not entertaining".” Regarding element clusters, electronic journals were grouped with television and radio, while paper journals were grouped with library. E-mail and newsgroups were grouped together, as were colleagues and conferences. The only surprise was the association of electronic journals with radio; they score high on "entertaining" and relatively high on surfing opportunity.
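
One way to obtain triads in which no pair of elements appears twice is a simple greedy pass over all possible triads. The sketch below is an assumption about how such a set could be built; the text does not say how McKnight actually chose the triads, and the function name is hypothetical.

```python
from itertools import combinations

# The eleven information sources named above.
SOURCES = [
    "Library (books)", "E-mail", "NewsGroup", "Newspaper", "Television",
    "Radio", "Journals (paper)", "Colleagues", "Conferences",
    "Journals (electronic)", "World Wide Web",
]


def pick_triads(elements):
    """Greedily collect triads so that no pair of elements occurs twice."""
    used_pairs = set()
    triads = []
    for triad in combinations(elements, 3):
        pairs = set(combinations(triad, 2))
        # Keep the triad only if none of its three pairs was used before.
        if used_pairs.isdisjoint(pairs):
            triads.append(triad)
            used_pairs |= pairs
    return triads


if __name__ == "__main__":
    for triad in pick_triads(SOURCES):
        print(" / ".join(triad))
```

A greedy pass does not guarantee the largest possible set of triads, and in practice one would usually shuffle the elements first so that the presentation order is not systematic.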
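
To give an idea of what a percentage cutoff means for construct clusters, here is a minimal sketch of a matching score between two constructs rated on a 1-5 scale. It is a simple percentage-similarity measure and not necessarily the formula implemented in FOCUS; comparing against the reversed poles is likewise an assumption, and the ratings are invented.

```python
from itertools import combinations


def match_percent(ratings_a, ratings_b, low=1, high=5):
    """Percentage similarity: 100 means identical ratings on all elements."""
    if len(ratings_a) != len(ratings_b) or not ratings_a:
        raise ValueError("constructs must be rated on the same non-empty element set")
    max_total = (high - low) * len(ratings_a)
    total = sum(abs(a - b) for a, b in zip(ratings_a, ratings_b))
    return 100.0 * (1 - total / max_total)


def best_match(ratings_a, ratings_b, low=1, high=5):
    """Best score of the direct comparison and the comparison with reversed poles."""
    reversed_b = [low + high - r for r in ratings_b]
    return max(match_percent(ratings_a, ratings_b, low, high),
               match_percent(ratings_a, reversed_b, low, high))


def pairs_above_cutoff(grid, cutoff=75.0):
    """Return construct pairs whose best matching score reaches the cutoff."""
    hits = []
    for a, b in combinations(sorted(grid), 2):
        score = best_match(grid[a], grid[b])
        if score >= cutoff:
            hits.append((a, b, score))
    return hits


if __name__ == "__main__":
    # Invented ratings of five elements; construct names borrowed from the text.
    grid = {
        "text": [5, 4, 1, 2, 5],
        "single focus": [5, 5, 2, 2, 4],
        "entertaining": [1, 2, 5, 4, 2],
    }
    for a, b, score in pairs_above_cutoff(grid):
        print(f"{a} ~ {b}: {score:.0f}%")
```

A full two-way cluster analysis would additionally reorder rows and columns so that similar constructs and similar elements end up next to each other.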

- Web site Analysis

Hassenzahl and Trautmann (2000) studied the user-perceived "character" of web site designs. Ten individuals from various backgrounds participated in the study. The authors intended to compare an old and a new web site design with six publicly available banking sites. Decisive to their selection of competitors (elements) was a heterogeneous appearance according to presentation, style, structure, interaction and usability.

For construct elicitation, a classic triadic method was used: “an individual is presented with a randomly drawn triad from a number of web site designs to be compared. S/He is asked to indicate in what respect two of the three designs are similar to each other and differ from the third. This procedure leads to the production of a construct that accounts for a perceived difference. The construct is then named (e.g. playful - serious, two-dimensional - three-dimensional, ugly - attractive), the individual indicates which of the two poles is perceived as desirable (i.e. has positive value). The process is repeated until no further novel construct arises.” (p. 167). The rating was done on a 7 point scale (-3 to +3).

As a first step, they computed an appealingness value per web site by computing its mean evaluation on all constructs, i.e. with respect to the positive poles.

A typical set of constructs that emerged was (positive poles to the left):

- functional -- playful

- broad range of products -- stock-market focused

- specialised -- bank-like

- analytic -- customer-friendly

- for layman -- for business people

The paper then compares the most appealing vs. the least appealing design, the new design vs. the most appealing design, and the new design vs. the old design. For analysis and discussion, only the discriminating constructs were shown. Discrimination was defined as having a difference of +2 or +3 vs. -2 or -3.
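
The appealingness value and the discrimination rule can be sketched as follows. The ratings and construct labels are invented, ratings are assumed to be oriented so that positive values point towards the desirable pole, and the rule "one design at +2 or +3, the other at -2 or -3" is our reading of the definition above.

```python
from statistics import mean

# Invented ratings on the -3..+3 scale; positive = towards the desirable pole.
RATINGS = {
    "old design": {"construct A": -2, "construct B": -1, "construct C": -3},
    "new design": {"construct A": 2, "construct B": 1, "construct C": 2},
}


def appealingness(site_ratings):
    """Mean rating of one web site over all constructs."""
    return mean(site_ratings.values())


def discriminating_constructs(site_a, site_b):
    """Constructs on which one site is rated >= +2 and the other <= -2."""
    found = []
    for construct, a in site_a.items():
        b = site_b[construct]
        if (a >= 2 and b <= -2) or (a <= -2 and b >= 2):
            found.append(construct)
    return found


if __name__ == "__main__":
    for site, ratings in RATINGS.items():
        print(f"{site}: appealingness {appealingness(ratings):.2f}")
    print(discriminating_constructs(RATINGS["old design"], RATINGS["new design"]))
```

In the study itself constructs differ between participants, so the comparison would be made per participant before results are pooled.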

- Search engine analysis

Sarah Crudge and Frances Johnson (2004) conducted an evaluation of search engines and their use with a study entitled "Using the information seeker to elicit construct models for search engine evaluation". They started with the conjecture that “from the perspective of the user engaged with the system in some information-seeking task, the range of relevant evaluation factors could be considerable”. They state that “A user's reaction to the system is composite, possibly determined by some or all of the extended range of user metrics, including ease of use and learning, task success, search results, usability of features, and aesthetics.” Their paper explores the feasibility of deriving meaningful user evaluation criteria and measures from the users' perception of the search tools through repertory grid analysis, and the authors formulated two research questions:

- 1 Is repertory grid technique a suitable method for user-centered determination of evaluative measures for Internet search engines?

- 2 What are the characteristics of the construct set that constitutes the information seeker's perception of search engines?

To facilitate comparison between the data sets of participants, the authors supplied the elements, i.e. AltaVista UK, Google UK, Lycos UK, and Wisenut, with the addition of the users' perception of an ideal search engine. Also, “since not all of the selected engines were well known to all participants, a familiarization session was included before interview.” Only four engines were selected because a “pilot study indicated that many participants would be unable to retain a complete impression of more than four engines introduced at a session”.

For this study, there were 10 participants and 5 to 11 constructs elicited per participant, with a mean of 8.4. Crudge and Johnson argued, based on other studies (e.g. Hassenzahl & Trautmann, 2001 or Moynihan, 1996), that “the use of ten participants will ensure determination of the complete set of important constructs”.

Firstly, participants provided an overall rating of success for each search tool, taken along a five-point Likert scale. Then, “due to the small number of search engines used, dyadic elicitation was deemed most suitable; engines were presented in pairs, and the participant was required to state either a similarity or a difference between the members of each pair. The participant was then asked to provide the opposite of the stated similarity or difference, by consideration of the remaining engines where possible. The two polar statements together formed a construct, which was represented by a five-point scale along which all elements could be rated.” During the interview, participants were shown a picture of the engine plus the printed result pages. Engine pairs were shown in randomized order until no new constructs were elicited. It is not clear to Daniel K. Schneider whether the whole grid was completed at a later stage or during elicitation.

The constructs obtained were able to discriminate reasonably well over the set of search engines, and most constructs also cluster or correlate with the participants' overall success ratings. Regarding research question two, the analysis was able to identify 75 different constructs. Some additional ones were eliminated because they were "too far" from the next closest one.
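
Whether a construct goes along with the overall success rating can be checked with a plain correlation coefficient. The sketch below uses Pearson's r on invented five-point ratings of four engines; the text does not say which coefficient the authors used, so this choice is an assumption.

```python
import math


def pearson(xs, ys):
    """Pearson correlation between two equally long rating vectors."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two vectors of the same length (at least 2 values)")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    if sxx == 0 or syy == 0:
        raise ValueError("correlation is undefined for constant ratings")
    return sxy / math.sqrt(sxx * syy)


if __name__ == "__main__":
    # Invented ratings of four search engines by one participant.
    overall_success = [3, 5, 2, 2]
    construct_ratings = [3, 5, 2, 1]  # a hypothetical construct
    print(f"r = {pearson(construct_ratings, overall_success):.2f}")
```

With only four or five elements per grid such coefficients are very unstable, so they are better read as a rough indication than as a test.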

Market research

Repertory grids have been used in market research and new product development to identify attributes of products that are not self-evident (O'Cinneide, 1986).

In education

Beryl Crooks (2001) investigated what “features of mixed media distance learning materials are effective in helping Open University students to learn independently”. The author used repertory grids to elicit students' perceptions of "guided learning" in instructional designs. More precisely, the study was conducted within a theoretical framework of Personal Construct Psychology (PCP) and activity theory. Designs were analysed at three levels:

- Guided independent learning at the 'activity' level

- Active and passive learning at the 'action' level

- Specific learning tasks at the 'operation' level.

Elements were "learning episodes". Constructs were assembled in personal construct hierarchies.

In the same reader edited by Pamela Denicolo and Maureen Pope, P. Denicolo presented a study about "Images of Teaching: Reflections from Student teachers, Practicing Teachers and Teacher Educators".

Ortrun Zuber-Skerritt (1987) conducted a repertory grid study of staff and students' personal constructs of educational research. “Comparison between the computer analysed results of their pre-course grids and those of their post-course grids demonstrated considerable developmental changes in the students' perception of the scope, delineation and clearer definition of what constituted for them good, effective research.”

As cognitive tool in research

Alexander et al. (2008) introduced a different form of repertory grid, “renamed a ReflectionGrid, as a collaboration tool. Members of a research team use this new technique to probe their individual understanding of what happened and what was achieved during a research event and then to share these insights. Hence, not only is the application new (reflection and construction of shared meaning rather than the analysis and synthesis of personal constructs) but the original grid technique has evolved.” The purpose of this approach is to seek a common ground and shared meaning within a research group through critical assessment of the individuals' assumptions and observations made during the research event. In particular, they were confronted with two challenges:

- (1) How does a team of researchers collaborate on the evaluation when each team member has his/her own conceptualisation of the way in which a particular event or action should be evaluated?

- (2) How could such a collaborative process be made more structured and explicit in order to contribute to the eventual rigour of the research?

More precisely, some abstract elements involving characteristics of a workshop process were being evaluated by the individual researchers. This process was organized in four steps:

- Group Construct Elicitation, i.e. members identified and agreed upon elements and bi-polar scales together. Elements represented objectives of the action research project, as opposed to concrete elements typically used with RepGrids. Constructs represented measures that would be used in the evaluation of the elements. At a later stage 1.B a choice of elements and constructs making up the grid was made.

- Over the next weeks, team members filled in the grid.

- The individual grids were aggregated into a single grid and a graphical representation showing the individual differences was made.

- “The group then met again to study the graphical presentation, compare the values assigned to each cell by the individuals and to discuss the underlying reasons for differences in perceptions.”
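
The aggregation step can be sketched as a cell-by-cell summary of the individual grids. This is a minimal illustration, not the procedure of Alexander et al.: the researchers, the element and the ratings are invented, and the mean plus the range (maximum minus minimum) are assumed as summary values.

```python
from statistics import mean


def aggregate(grids):
    """Combine individual grids into one grid of per-cell mean and spread.

    `grids` maps a researcher to {(element, construct): rating}; only cells
    rated by every researcher are kept.
    """
    if not grids:
        return {}
    shared_cells = set.intersection(*(set(g) for g in grids.values()))
    summary = {}
    for cell in sorted(shared_cells):
        values = [g[cell] for g in grids.values()]
        summary[cell] = {"mean": mean(values), "spread": max(values) - min(values)}
    return summary


if __name__ == "__main__":
    # Hypothetical 1-9 ratings by three researchers for one abstract element.
    grids = {
        "R1": {("Objective 1", "Relevance"): 2, ("Objective 1", "Feasibility"): 3},
        "R2": {("Objective 1", "Relevance"): 3, ("Objective 1", "Feasibility"): 8},
        "R3": {("Objective 1", "Relevance"): 1, ("Objective 1", "Feasibility"): 4},
    }
    for (element, construct), stats in aggregate(grids).items():
        print(f"{element} / {construct}: mean {stats['mean']}, spread {stats['spread']}")
```

Cells with a large spread are the natural candidates for the group discussion described in the last step.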

Abstract constructs and values used in the grid were: Creativeness (1=Very, 9=Not), Sophistication (1=Very, 9=Naive), Spontaneity (1=Very, 9=Not considered), Feasibility (1=Highly, 9=Not), Number (1=Lots, 9=Few), Expectations (1=Met, 9=Not met), Effectiveness (1=Very, 9=Minimal), Efficiency (1=Very, 9=Minimal), Scope (1=Broad, 9=Narrow), Relevance (1=High, 9=Low), Variety (1=High, 9=Low), Enthusiasm (1=High, 9=Low), Commitment (1=High, 9=Low), Change (1=Radical, 9=Unchanged), Quality (1=High, 9=Low), Openness (1=Open, 9=Closed), Sensitivity (1=Very, 9=Not), Democratic (1=Egalitarian, 9=Autocratic), Respect (1=High, 9=Low), Awareness (1=High, 9=Low), Information (1=Formed, 9=Not formed), Knowledgeability (1=High, 9=Low), Being critical (1=High, 9=Low).

The authors argued that “Briggs et al. (2003) have identified five basic patterns of thinking that are required to move through a reasoning process, namely, Diverge, Converge, Organize, Evaluate, and Build Consensus. Convergence takes place in step 1, Divergence in step 2, Organizing particularly in Step 3 and both Evaluate and Build Consensus in Step 4.” In other words, RepGrid technology has been successfully used for group support.

Software

Specialized software can do any or all of three things:

- Help to design repertory grids

- Help to administer repertory grids.

- Perform a series of analyses.

An alternative method is to do the first part "by hand", the second with a web-based survey manager tool and the last with a normal statistics package. Many statistics programs can do cluster analysis and component analysis. Correspondence analysis is less available. None of the specialized software below has been tested in depth (26 January 2009 - DKS)

List of Software

- Commercial

- Gridcore Correspondence analysis tool for grid data. Between euros 50 and 150.

- GridSuite. With GridSuite, it is possible to elicit, process and evaluate repertory grid interviews. Educational pricing is between 15 euros (student/45 days) and 1160 euros (class, no limits).

- Enquire Within (free evaluation copy)

- RepGrid. From the same team that developed WebGrid. A free 15 elements/15 constructs version is available. The full version is 500.00 euros.

- Point of Mind - .map software (German)

- Free

- The Idiogrid (Win) program by James W. Grice. Idiographic Analysis with Repertory Grids. Free since 2008, but users that get funding are expected to pay 105 $US.

- WinGrid (became a tool for artists).

- Omnigrid (older Mac/PC code)

Free online services

- WebGrid III and IV (free multi-purpose repertory grid online software, runs on ports 1500 and 2000). Homepage (Mildred Shaw & Brian Gaines). See the Analysis techniques section where we used this system.

- sci:vesco.web (limited version is free and fully operational).

Standards

- GridML Daniel K. Schneider doesn't know how well this Walter et al (2004) proposal is supported, but it's certainly a good initiative !

Links

Journals

- The Journal of Constructivist Psychology publishes articles on grid

Associations and centres

- European Personal Construct Association (EPCA)

- The Centre for Personal Construct Psychology (UK)

- Personal Construct Psychology Association (PCPA)

- Center for Person-Computer Studies (Mildred Shaw & Brian Gaines, of University of Calgary, both are emeritus but seem to continue their work - 18:28, 11 February 2009 (UTC)). In particular, see:

- CPCS/KSI/KSS Reports.

- WebGrid online programs (open for use by researchers). The webgrid servers also link to publications.

- PCPTOOLS List Server (mailing list)

- Enquire Within (Valerie Stewart & John Mayes, New Zealand)

- The PCP Information Centre (Joern Scheer, Germany )

Links of links

- Jeanette Hemmecke (Forschungsseite)

- The Psychology of Personal Constructs/The Repertory Grid Technique (Jörg Scheer).

- On-line papers on PCP (good large list)

- PPC und Repertory Grid Technik

- Repertory Grid Site

- The RepGrid Gateway (Jankowicz)

Short introductions

- Repertory Grid Technique (RGT)

- Repertory Grid Technique

- Repertory Grid (Wikipedia)

- Enquire Within (Kelly's Theory Summarised).

- Repertory Grid (short technical introduction of teacher assessment by Constance A. Steinkuehler & Sharon J. Derry)

- How to use a repertory grid

- Repertory grid methods

- Atherton J S (2007) Learning And Teaching: Personal Construct Theory [On-line] UK: Available: http://www.learningandteaching.info/learning/personal.htm Accessed: 26 January 2009

- Repertory Grid Technique (Middlesex University)

Manuals

- Bell, Richard, The Analysis of Repertory Grid Data using SPSS, broken link/needs replacement

Some statistics links

See Research methodology resources

Bibliography

- Alexander, Patricia; Johan van Loggerenberg, Hugo Lotriet and Jackie Phahlamohlaka (2008). The Use of the Repertory Grid for Collaboration and Reflection in a Research Context, Group Decision and Negotiation, DOI 10.1007/s10726-008-9132-z

- Baldwin, Dennis A., Greene, Joel N., Plank, Richard E., Branch, George E. (1996). Compu-Grid: A Windows-Based Software Program for Repertory Grid Analysis, Educational and Psychological Measurement 56: 828-832

- Banister, P., Burman, E., Parker, I., Taylor, M., & Tindall, C. (1994). Qualitative methods in psychology. Philadelphia: Open University Press.

- Bannister D. & Mair J.M.M. (1968) The Evaluation of Personal Constructs London: Academic Press

- Bannister D. & Fransella F. (1986) Inquiring Man: the psychology of personal constructs (3rd edition) London: Routledge .

- Bell, R. (1988): Theory-appropriate analysis of repertory grid data. International Journal of Personal Construct Psychology. 1:101-118

- Bell, R. (2000) 'Why do statistics with Repertory Grids?', The Person in Society.

- Bell, R. C. (1990). Analytic issues in the use of repertory grid technique. In G. J. Neimeyer & R. A. Neimeyer (Eds.), Advances in Personal Construct Psychology (Vol. 1, pp. 25-48). Greenwich, CT: JAI

- Bell RC & Vince J (2002) Which vary more in repertory grid data: constructs or elements? Journal of Constructivist Psychology 15, 305-314. doi:10.1080/10720530290100514

- Boyle, T.A. (2005), "Improving team performance using repertory grids", Team Performance Management, Vol. 11 Nos. 5/6, pp. 179-187. DOI 10.1108/13527590510617756

- Briggs RO, De Vreede G-J, Nunamaker JF (2003) Collaboration engineering with thinklets to pursue sustained success with Group Support Systems. J Manag Inf Syst 19(4):31-64

- Bringmann, M. (1992). Computer-based methods for the analysis and interpretation of personal construct systems. In G. J. Neimeyer & R. A. Neimeyer (Eds.), Advances in personal construct psychology (Vol. 2, pp. 57-90). Greenwich, CT: JAI.

- Burke, M. (2001). The use of repertory grids to develop a user-driven classification of a collection of digitized photographs. Proceedings of the 64th ASIST Annual Meeting (pp. 76-92). Medford. NJ: Information Today Inc.

- Caputi, P., & Reddy, P. (1999). A comparison of triadic and dyadic methods of personal construct elicitation. Journal of Constructivist Psychology, 12(3), 253-264.

- Clarke, Steve (1999). Using Personal Construct Psychology with Pupils Who Experience Emotional and Behavioural Difficulties—A Case Study. Child Psychology and Psychiatry Review 4, 109-116. DOI:10.1017/S1360641799001987

- Crooks, Beryl (2001) The Combined Use of Personal Construct Psychology and Activity Theory as a Framework for Interpreting the Process of Independent Learning, in Denicolo, Pamela and Pope, Maureen (eds.) - Transformative Professional Practice: Personal Construct Approaches to Education and Research. London: Whurr Publications.

- Crudge, Sarah E. and Johnson, F. C. (2007), "Using the repertory grid and laddering technique to determine the user's evaluative model of search engines", Journal of Documentation, Vol. 63 No. 2, pp. 259-280.

- Crudge, Sarah E. and Johnson, F. C. (2004). Using the information seeker to elicit construct models for search engine evaluation. J. Am. Soc. Inf. Sci. Technol. 55, 9 (Jul. 2004), 794-806. DOI 10.1002/asi.20023.

- Denicolo, Pamela and Pope, Maureen (2001) - Transformative Professional Practice: Personal Construct Approaches to Education and Research. London: Whurr Publications.

- Dillon, A. (1994). Designing Usable Electronic Text, CRC, ISBN 0748401121, ISBN 074840113X

- Dillon, A. and McKnight, C. 1990. Towards a classification of text types: a repertory grid approach. Int. J. Man-Mach. Stud. 33, 6 (Oct. 1990), 623-636. DOI 10.1016/S0020-7373(05)80066-5

- Dunn, W.N. (1986). The policy grid: A cognitive methodology for assessing policy, dynamics. In W.N. Dunn (Ed.), Policy analysis: Perspectives, concepts and methods (pp.355-375). Greenwich, CT: JAI Press.

- Easterby-Smith, M., Thorpe, R. and Holman, D. (1996), "Using repertory grids in management", Journal of European Industrial Training, Vol. 20 No. 2, pp. 3-30.

- Fransella, Fay (2005). International Handbook of Personal Construct Psychology. Wiley. Online ISBN 9780470013373 DOI 10.1002/0470013370.app2. (you can buy individual chapters).

- Fransella, Fay; Richard Bell, Don Bannister (2003). A Manual for Repertory Grid Technique, 2nd Edition, Wiley, ISBN: 978-0-470-85489-1.

- Gaines, B. R., & Shaw, M. L. G. (1993). Eliciting Knowledge and Transferring it Effectively to a Knowledge-Based System. IEEE Transactions on Knowledge and Data Engineering 5, 4-14. (A reprint is available from Knowledge Science Institute, University of Calgary, HTML)

- Gaines, B. R., & Shaw, M. L. G. (1997). Knowledge acquisition, modelling and inference through the WorldWideWeb, International Journal of Human–Computer Studies, 46, 729–759.

- Gaines, Brian R. & Mildred L. G. Shaw (2003). Personal Construct Psychology and the Cognitive Revolution, CPCS-TR-May-03, University of Calgary. HTML (This paper includes a good introduction to Personal Construct Psychology (PCP) and its influence on knowledge engineering). See also: the PCP home page

- Gaines, Brian R. & Mildred L. G. Shaw (2007). WebGrid Evolution through Four Generations 1994-2007, HTML

- Grice J.W. (2002). Idiogrid: Software for the management and analysis of repertory grids, Behavior Research Methods, Instruments, & Computers, Volume 34, Number 3, pp. 338-341. Abstract/PDF.

- Guillem Feixas and Jose Manuel Cornejo Alvarez (???), A Manual for the Repertory Grid, Using the GRIDCOR programme, version 4.0. HTML

- Harter SL & Erbes CR (2004) Content analysis of the personal constructs of female sexual abuse survivors elicited through repertory grid technique. J Constr Psychol 17:27-43. doi:10.1080/10720530490250679

- Hassenzahl, M., Trautmann, T. (2001), "Analysis of web sites with the repertory grid technique", Conference on Human Factors in Computing Systems 2001, 167-168. ISBN 1-58113-340-5. PDF Reprint

- Hassenzahl, Marc & Wessler, Rainer. (2000), Capturing design space from a user perspective: the Repertory Grid Technique revisited. International Journal of Human-Computer Interaction, 2000, Vol. 12 Issue 3/4, p441-459

- Hawley, Michael (2007). The Repertory Grid: Eliciting User Experience Comparisons in the Customer’s Voice, Web page, Uxmatters, HTML. (Good simple article that shows how to use one kind of grid technique to analyse web sites).

- Hemmecke, J.; Stary, C. (2007). The tacit dimension of user-tasks: Elicitation and contextual representation. Proceedings TAMODIA'06, 5th Int. Workshop on Task Models and Diagrams for User Interface Design. Springer Lecture Notes in Computer Science, LNCS 4385, pp. 308-323. Berlin, Heidelberg: Springer.

- Hemmecke, Jeannette & Christian Stary, A Framework for the Externalization of Tacit Knowledge, Embedding Repertory Grids, Proceedings of the Fifth European Conference on Organizational Knowledge, Learning, and Capabilities 2-3 April 2004, Innsbruck. PDF Preprint.

- Honey, Peter (1979). The repertory grid in action: How to use it as a pre/post test to validate courses, Industrial and Commercial Training, 11 (9), 358-369. DOI 10.1108/eb003742

- Hunter, M.G. (1997). The use of RepGrids to gather interview data about information systems analysts, Information Systems Journal, Volume 7 Issue 1, Pages 67 - 81. DOI 10.1046/j.1365-2575.1997.00005.x

- Jankowicz, D. (2001), "Why does subjectivity make us nervous?: Making the tacit explicit", Journal of Intellectual Capital, Vol. 2 No. 1, pp. 61-73. DOI 10.1108/14691930110380509.

- Jankowicz, D. (2004), The Easy Guide to Repertory Grids, John Wiley & Sons Ltd, Chichester, UK. (An easy introduction to the repertory grid technique.)

- Jankowicz, Devi & Penny Dick (2001). "A social constructionist account of police culture and its influence on the representation and progression of female officers: A repertory grid analysis in a UK police force", Policing: An International Journal of Police Strategies & Management, 24 (2) pp. 181-199.

- Kelly G (1955). The Psychology of Personal Constructs New York: W W Norton.

- Latta, G.F., & Swigger, K. (1992). Validation of the repertory grid for use in modeling knowledge. Journal of the American Society for Information Science, 43(2), 115-129.

- Lee J & Truex DP (2000) Exploring the impact of formal training in ISD methods on the cognitive structure of novice information systems developers. Inf Syst J 10:347-367. doi:10.1046/j.1365-2575.2000.00086.x

- Marsden, D. and Littler, D. (2000), "Repertory grid technique – An interpretive research framework", European Journal of Marketing, Vol. 34 No. 7, pp. 816-834.

- McKnight, Cliff (2000), The personal construction of information space, Journal of the American Society for Information Science, v.51 n.8, p.730-733, May 2000. DOI 10.1002/(SICI)1097-4571(2000)51:8<730::AID-ASI50>3.0.CO;2-8

- Mitterer, J. & Adams-Webber, J. (1988). OMNIGRID: A general repertory grid design, administration and analysis program. Behavior Research Methods, Instruments & Computers, 20, 359-360.

- Mitterer, J.O. & Adams-Webber, J. (1988). OMNIGRID: A program for the construction, administration and analysis of repertory grids. In J. C. Mancuso & M. L. G. Shaw (Eds.), Cognition and personal structure: Computer access and analysis (pp. 89-103). New York: Praeger.

- Moynihan, T. (1996). An inventory of personal constructs for information systems project risk researchers. Journal of Information Technology, 11, 359-371.

- Neimeyer, G. J. (1993). Constructivist assessment. Thousand Oaks, CA: Sage.

- Neimeyer, R. A. & Neimeyer, G. J. (Eds.) (2002). Advances in Personal Construct Psychology. New York: Praeger.

- Peters, W.L. (1994). Repertory Grid as a Tool for Training Needs Analysis, The Learning Organization, Vol. 1 No. 2, 1994, pp. 23-28. DOI 10.1108/09696479410060964

- Pope, Maureen and Denicolo, Pamela (2001). Transformative Education: Personal Construct Approaches to Practice and Research. London: Whurr Publications.

- Ravenette, A.T. (1997), Tom Ravenette: Selected Papers. Personal Construct Psychology and the Practice of an Educational Psychologist, EPCA Publications, Farnborough. ISBN 0-9530198-1-0.

- Ravenette, Tom (1999). Personal Construct Theory in educational psychology. A practitioner's view. London: Whurr.

- Seelig, Harald (2000). Subjektive Theorien über Laborsituationen: Methodologie und Struktur subjektiver Konstruktionen von Sportstudierenden, PhD thesis, Institut für Sport und Sportwissenschaft, Universität Freiburg. Abstract/PDF

- Sewell, K. W., Adams-Webber, J., Mitterer, J., Cromwell, R. L. (1992): Computerized repertory grids: Review of the literature. International Journal of Personal Construct Psychology. 5:1-23

- Sewell, K.W., Mitterer, J.O., Adams-Webber, J., & Cromwell, R.L. (1991). OMNIGRID-PC: A new development in computerized repertory grids. International Journal of Personal Construct Psychology, 4, 175-192.

- Shapiro, B. L. (1996). A case study of change in elementary student teacher thinking during an independent investigation in science: Learning about the "face of science that does not yet know." Science Education, 5, 535-560.

- Shaw, M.L.G. (1980). On Becoming A Personal Scientist. London: Academic Press.

- Shaw, Mildred L G & Brian R Gaines (1989). Comparing Conceptual Structures: Consensus, Conflict, Correspondence and Contrast, Knowledge Acquisition 1(4), 341-363. (A reprint is available from Knowledge Science Institute, University of Calgary, HTML. Interesting paper that discusses how to deal with different kinds of experts.)

- Shaw, Mildred L G & Brian R Gaines (1992). Kelly's "Geometry of Psychological Space" and its Significance for Cognitive Modeling, The New Psychologist, 23-31, October (HTML Reprint)

- Shaw, Mildred L G & Brian R Gaines (1995) Comparing Constructions through the Web, Proceedings of CSCL95: Computer Support for Collaborative Learning (Schnase, J. L., and Cunnius, E. L., eds.), pp. 300-307. Lawrence Erlbaum, Mahwah, New Jersey. A reprint is available from Knowledge Science Institute, University of Calgary, HTML (Presents a first version of the web grid system)

- Siau, Keng, Xin Tan & Hong Sheng (2007). Important characteristics of software development team members: an empirical investigation using Repertory Grid, Information Systems Journal DOI: 10.1111/j.1365-2575.2007.00254.x

- Stein, Sarah J., Campbell J. McRobbie and Ian Ginns (1998). Insights into Preservice Primary Teachers' Thinking about Technology and Technology Education, Paper presented at the Annual Conference of the Australian Association for Research in Education, 29 November to 3 December 1998, HTML

- Stewart, V. & Stewart, A. (1981) Business Applications of Repertory Grid. McGraw-Hill, London.

- Tan, F.B., Hunter, M.G. (2002), "The repertory grid technique: a method for the study of cognition in information systems", MIS Quarterly, Vol. 26 No.1, pp.39-57. (explains various elicitation techniques)

- Walter, Otto B.; Andreas Bacher, and Martin Fromm. (2004). A proposal for a common data exchange format for repertory grid data. Journal of Constructivist Psychology, 17(3):247–251, July 2004.

- Weakley, A. J. and Edmonds E. A. 2005. Using Repertory Grid in an Assessment of Impression Formation. In Proceedings of Australasian Conference on Information Systems, Sydney 2005

- Whyte G & Bytheway A (1996) Factors affecting information systems' success. Int J Serv Ind Manag 7 (1):74-93. doi:10.1108/09564239610109429

- Zuber-Skerritt and Roche (2004). "A constructivist model for evaluating postgraduate supervision: a case study", Quality Assurance in Education, Vol. 12 No. 2, pp. 82-93. (Access restricted)

- Zuber-Skerritt, Ortrun. A repertory grid study of staff and students' personal constructs of educational research, Higher Education, 16 (5) 603-623. Abstract